State of the art in benefit–risk analysis: Environmental health

This page is a nugget. The page identifier is Op_en5545.

This page (including the files available for download at the bottom of this page) contains a draft version of a manuscript whose final version is published in Food and Chemical Toxicology 50 (2012) 40–55. If referring to this text in scientific or other official papers, please refer to the published final version as: M.V. Pohjola, O. Leino, V. Kollanus, J.T. Tuomisto, H. Gunnlaugsdóttir, F. Holm, N. Kalogeras, J.M. Luteijn, S.H. Magnússon, G. Odekerken, M.J. Tijhuis, Ø. Ueland, B.C. White, H. Verhagen: State of the art in benefit–risk analysis: Environmental health. Food and Chemical Toxicology 50 (2012) 40–55, doi:10.1016/j.fct.2011.06.004.

The version history of the manuscript can be found in Heande (password protected).

Title

State of the art in benefit–risk analysis: Environmental health

Authors and contact information

- M.V. Pohjola, corresponding author

- (National Institute for Health and Welfare, Finland)

- (Tel.: +358 17 201479; fax: +358 17 201480, E-mail: mikko.pohjola@thl.fi)

- S.H. Magnússon

- (Matís, Icelandic Food and Biotech R&D, Iceland)

- N. Kalogeras

- (Maastricht University, School of Business and Economics, The Netherlands)

- G. Odekerken-Schröder

- (Maastricht University, School of Business and Economics, The Netherlands)

- H. Gunnlaugsdóttir

- (Matís, Icelandic Food and Biotech R&D, Iceland)

- F. Holm

- (FoodGroup Denmark & Nordic NutriScience, Denmark)

- O. Leino

- (National Institute for Health and Welfare, Finland)

- V. Kollanus

- (National Institute for Health and Welfare, Finland)

- J.M. Luteijn

- (University of Ulster, School of Nursing, United Kingdom)

- M.J. Tijhuis

- (Maastricht University, School of Business and Economics, The Netherlands)

- (National Institute for Public Health and the Environment, The Netherlands)

- J.T. Tuomisto

- (National Institute for Health and Welfare, Finland)

- Ø. Ueland

- (Nofima, Norway)

- B.C. White

- (University of Ulster, Department of Pharmacy & Pharmaceutical Sciences, School of Biomedical Sciences, Northern Ireland, United Kingdom)

- H. Verhagen

- (National Institute for Public Health and the Environment, The Netherlands)

- (Maastricht University, NUTRIM School for Nutrition, Toxicology and Metabolism, The Netherlands)

- (University of Ulster, Northern Ireland Centre for Food and Health, Northern Ireland, United Kingdom)

Article info

Article history: Available online 12 June 2011

Abstract

Environmental health assessment covers a broad area: virtually all systematic analysis to support decision making on issues relevant to environment and health. Consequently, a variety of approaches have been developed and applied for different needs within the broad field. In this paper we explore the plurality of approaches and attempt to reveal the state-of-the-art in environmental health assessment by characterizing and explicating the similarities and differences between them. A diverse, yet concise, set of approaches to environmental health assessment is analyzed in terms of nine attributes: purpose, problem owner, question, answer, process, use, interaction, performance and establishment. The conclusions of the analysis underline the multitude and complexity of issues in environmental health assessment as well as the variety of perspectives taken to address them. In response to the challenges, a tendency towards developing and applying more inclusive, pragmatic and integrative approaches can be identified. The most interesting aspects of environmental health assessment are found among these emerging approaches: (a) increasing engagement between assessment and management as well as stakeholders, (b) striving to frame assessments according to specific practical policy needs, (c) integration of multiple benefits and risks, as well as (d) explicit incorporation of both scientific facts and value statements in assessment. However, such approaches are yet to become established, and many contemporary mainstream environmental health assessment practices can still be characterized as relatively traditional risk assessment.

Keywords

Environmental health, Benefit–risk assessment, Impact assessment, Integrated assessment

Introduction

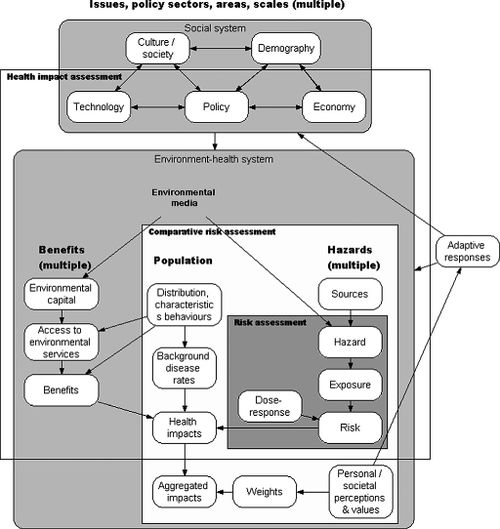

The term “environmental health assessment” covers a broad area. In principle, all endeavors of systematic analysis aiming to support decision making on all issues relevant to the relationships between environment and human health could be considered environmental health assessments. Given this breadth and complexity, it is not surprising that a diverse range of approaches building on different grounds and addressing different needs within the field has evolved. They all share the basic idea of applying science-based means and methods for producing knowledge to support decision making on societally relevant issues. Due to differences in emphasis, scope, theoretical basis and context of development and application, the basic idea becomes manifested in different ways in each approach. Fig. 1 illustrates the complexity of the environmental health field and the domains, as well as limitations, of certain assessment approaches. As can be seen, some approaches focus on risks only, while others consider benefits as well. Approaches also differ in terms of what risks and/or benefits are included for explicit consideration and comparison in an assessment.

This paper reviews a concise set of approaches to environmental health assessment. It does not attempt to be an exhaustive review of all relevant approaches in the field, but an overview of a sufficiently extensive and diverse set of existing approaches so that the plurality of views, as well as the essential characteristics present in contemporary approaches to environmental health assessment, can be explicated. The summary of the overview results provides a general description of contemporary practices and a basis for conclusions on the most essential aspects of environmental health assessment in terms of contemporary and future benefit–risk analysis, within environmental health as well as other domains.

Framework for analyzing approaches to environmental health assessment

The set of approaches selected for the overview is intended to be extensive, diverse, yet concise, in order to be sufficiently representative of the field of environmental health assessment, but still analyzable. The final composition of the set of approaches results from a process of reasoning by the authors, and is based on prior knowledge and experience in the field of environmental health. The guiding principles in choosing approaches were that all the main areas and aspects of environmental health assessment should be covered, but only approaches significantly adding to the diversity of the set were included in the overview.

The final set includes approaches that identify themselves as risk assessment, impact assessment or integrated assessment. Some of the included approaches have been explicitly developed to serve the needs of regulatory work, while some build more on the tradition of academic research. The approaches also vary significantly in terms of novelty and establishment. As different interpretations on the essence of many of the chosen approaches exist, we have tried to pick the hallmark examples of each. For the sake of transparency and clarity, only as few sources of information as possible have been chosen as the basis for describing and characterizing each approach.

We created a framework for the analysis in order to guarantee a consistent scrutiny across the set of approaches, and to produce comparable characterizations. The basic structure and the attributes of the framework are adapted from the PSSP (purpose, structure, state, performance) ontology (Pohjola 2003, 2006) developed originally in the context of process design. The attributes address the way each approach frames its purpose, issues of interest, assessment practice, linkage with use, as well as goodness of the assessment process and product. The attributes are presented and briefly explained in Table 1.

| Attribute | Explanation |
|---|---|
| Purpose | What need(s) does an assessment address? |
| Problem owner | Who has the intent or responsibility to conduct the assessment? |
| Question | What are the questions addressed in the assessment? Which issues are considered? |
| Answer | What kind of information is produced to answer the questions? |
| Process | What is characteristic to the assessment process? |
| Use | What are the results used for? Who are the users? |
| Interaction | What is the primary model of interaction between assessment and using its products? (see Table 2 for options) |
| Performance | What is the basis for evaluating the goodness of the assessment and its outcomes? |
| Establishment | Is the approach well recognized? Is it influential? Is it broadly applied? |

The characterizations of the different assessment approaches are in the form of freely formatted textual expressions and graphical illustrations, and are primarily based on one or two selected sources of information: books, scientific articles or websites. In cases where these sources do not contain sufficient or explicit descriptions of the characteristics of the approach, additional information sources or the authors’ own interpretations are used as complementary material. In particular, the characterizations of interaction, performance, and establishment often include author judgments informed by the source material and the authors’ experience within the field.

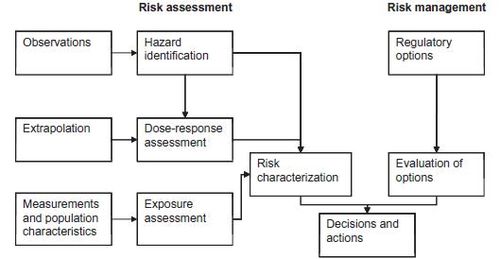

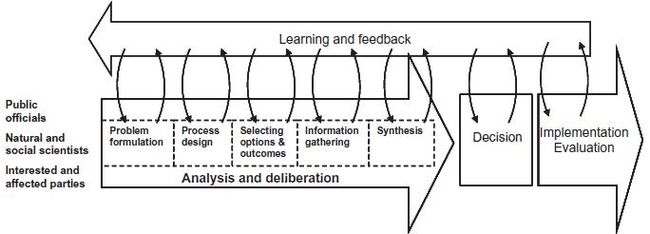

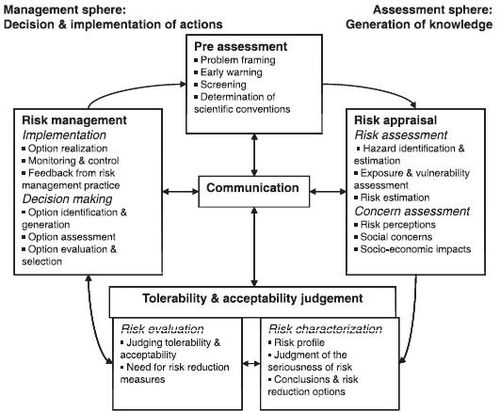

All process diagrams (Figs. 2–9) have been taken from the primary information sources and modified into the same format, still maintaining their original characteristics. In these diagrams the process of doing work in a part of an assessment is described as either a thin-border box or a white, bulky arrow. The products of this work (often reports of some kind) are described as thick-border boxes. Information flows between work processes are described with thin solid arrows. Unlike Figs. 2–9, which are process diagrams, Fig. 1 is an influence diagram. It describes real-world phenomena (white nodes) and their causal connections (thin arrows).

The categorization of the models of interaction is an adaptation of a categorization for models of linking knowledge and action developed by van Kerkhoff and Lebel (2006) in the context of sustainable development. The adapted categories of interaction are presented and briefly explained in Table 2. The categories are perceived to form a continuum of increasing engagement and power sharing when moving from trickle-down towards learning.

| Linkage | Explanation |
|---|---|
| Trickle-down | Assessor’s responsibility ends at publication of results. Good results are assumed to be taken up by users without additional efforts. |
| Transfer and translate | One-way transfer and adaptation of results to meet assumed needs and capabilities of assumed users. |
| Participation | Individual or small-group level engagement on specific topics or issues. Participants have some power to define assessment problems. |
| Integration | Organization-level engagement. Shared agendas, aims and problem definition among assessors and users. |
| Negotiation | Strong engagement on different levels, interaction an ongoing process. Assessment information as one of the inputs to guide action. |
| Learning | Strong engagement on different levels, interaction an ongoing process. Assessors and users share learning experiences and implement them in their respective contexts. Learning in itself a valued goal. |
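As an illustration only, the nine attributes of Table 1 and the interaction continuum of Table 2 could be held in a small data structure so that characterizations stay comparable across approaches. The following Python sketch is not part of the original framework: the class and field names are hypothetical, the numeric ordering of the interaction categories merely encodes the continuum from trickle-down towards learning, and the example record paraphrases the Red Book characterization given later in this text.

```python
from dataclasses import dataclass, fields
from enum import IntEnum


class Interaction(IntEnum):
    """Table 2 categories; a higher value means more engagement and power sharing."""
    TRICKLE_DOWN = 1
    TRANSFER_AND_TRANSLATE = 2
    PARTICIPATION = 3
    INTEGRATION = 4
    NEGOTIATION = 5
    LEARNING = 6


@dataclass(frozen=True)
class Characterization:
    """One assessment approach described by the nine attributes of Table 1."""
    approach: str          # label of the approach (not one of the nine attributes)
    purpose: str
    problem_owner: str
    question: str
    answer: str
    process: str
    use: str
    interaction: Interaction
    performance: str
    establishment: str


# The nine framework attributes, i.e. every field except the label.
attribute_names = [f.name for f in fields(Characterization) if f.name != "approach"]

# Example record, paraphrasing the Red Book characterization in this text.
red_book = Characterization(
    approach="Red Book risk assessment",
    purpose="Characterize potential adverse health effects of environmental hazards",
    problem_owner="Scientific experts dealing with the issue",
    question="What is the estimated incidence of an adverse effect in a given population?",
    answer="An estimate of the risk",
    process="Hazard identification, dose-response assessment, "
            "exposure assessment, risk characterization",
    use="Agency policy decision making (risk management)",
    interaction=Interaction.TRANSFER_AND_TRANSLATE,
    performance="Uncertainty analysis of the risk estimates",
    establishment="Established; core of most later approaches",
)
```

Because the interaction categories are ordered, records built this way can be sorted or compared by degree of engagement, for example `Interaction.NEGOTIATION > red_book.interaction`.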

The main characteristics of the selected assessment approaches are summarized and combined for comparison and synthesis. The purpose of the overview is not to rank the different approaches, but to highlight the essential similarities and differences between them, and to represent the plurality of views on environmental health assessment. Some of the most interesting findings are also taken up for further scrutiny. Finally, based on the overview summary, conclusions are drawn regarding the aspects of environmental health assessment that other fields of assessment, e.g. food benefit–risk assessment, could benefit from taking into account.

Approaches to environmental health assessment

This chapter contains the characterizations of eight different approaches to environmental health assessment: Red Book risk assessment (NRC, 1983), Understanding risk (NRC, 1996), IRGC (International Risk Governance Council) risk governance framework (IRGC, 2005), Chemical risk assessment: REACH (Registration, evaluation, authorization, and restriction of chemical substances) (ECHA 2008a), Environmental impact assessment (EIA): YVA (Ympäristövaikutusten arviointi, Finnish for EIA) (Act on Environmental Impact Assessment Procedure 468/1994, revised 267/1999 and 458/2006; EIA Decree 794/1994, revised 268/1999 and 713/2006), Health impact assessment (WHO, 1999), Integrated environmental health impact assessment (Briggs, 2008), and Open assessment (Opasnet website; Tuomisto and Pohjola (Eds.), 2007). The main characteristics of all approaches are summarized in Tables 3a and 3b and discussed in chapter 4.

Red Book risk assessment

In 1983, the Committee on the Institutional Means for Assessment of Risk to Public Health in the National Research Council (NRC) in the United States of America (USA) published a report commonly referred to as the “Red Book”, which explored the intricate relations between science and policy in assessing adverse health effects associated with human exposure to toxic substances (NRC, 1983). This description of a systematic process that separates risk assessment from policy making and unifies the risk assessment guidelines for all regulatory agencies can be considered as the cornerstone of contemporary risk assessment.

The purpose of a risk assessment is to characterize the potential adverse health effects of environmental hazards and the uncertainties related to the assessment. The assessment is produced to serve the needs of risk management, i.e. the process of evaluating alternative regulatory actions and selecting among them. Risk management is an agency decision making process that entails consideration of political, social, economic, and engineering information to develop, analyze, and compare regulatory options and to select the appropriate regulatory response to a potential chronic health hazard. Risk assessment and risk management are considered as independent entities. The problem owners in risk assessment process are the scientific experts dealing with the issue. Respectively, the decision making problems in risk management are owned by the public officials in the agencies responsible for dealing with the particular issue.

Risk assessment aims to answer the question: what is the estimated incidence of an adverse effect in a given population? As an answer, the assessment provides an estimate of the risk.

The risk assessment process (Fig. 2) consists of four steps: hazard identification (does the agent cause an adverse effect?), dose–response assessment (what is the relationship between dose and incidence in humans?), exposure assessment (what exposures are currently experienced or anticipated under different conditions?), and risk characterization, which summarizes the results of the previous steps. Risk assessment is considered to be a strictly scientific process conducted by experts, and it should be separated from the decision making and risk management to safeguard the objectivity and credibility of the assessment.

Risk assessment results are used in a federal agency policy decision making process. In the risk management process, agency decisions and actions are taken based on the risk estimates considered together with information on regulatory options and their potential public health, economic, social, and political consequences. In principle, the risk management addresses the question: “which regulatory option regarding the risk should be chosen?”.
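How the dose–response and exposure steps feed into risk characterization can be sketched with a small calculation. The Python example below is a simplified illustration and not a method prescribed by the Red Book: it assumes a linear, no-threshold dose–response relationship, and the function names, units and numbers are hypothetical.

```python
def estimated_incidence(slope_per_unit_dose: float, mean_dose: float) -> float:
    """Individual risk under an ASSUMED linear, no-threshold dose-response model.

    slope_per_unit_dose: extra risk per unit of dose (from dose-response assessment)
    mean_dose: average dose in the population (from exposure assessment)
    The result is a probability, so it is capped at 1.
    """
    if slope_per_unit_dose < 0 or mean_dose < 0:
        raise ValueError("slope and dose must be non-negative")
    return min(1.0, slope_per_unit_dose * mean_dose)


def expected_cases(slope_per_unit_dose: float, mean_dose: float, population: int) -> float:
    """Risk characterization: expected number of cases in the exposed population."""
    return estimated_incidence(slope_per_unit_dose, mean_dose) * population


# Hypothetical inputs: slope 0.002 per mg/kg/day, mean dose 0.05 mg/kg/day,
# one million exposed people; this gives roughly 100 expected cases.
cases = expected_cases(0.002, 0.05, 1_000_000)
```

A full risk characterization would also report the uncertainties of such an estimate, which this sketch leaves out.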

The model of interaction is best described as transfer and translate. Results of an assessment are intended and targeted for a predefined need, but there is no interaction between the assessment process and use, not to mention stakeholders, besides transferring of the risk assessment results to the risk management process. The performance of risk assessment is evaluated based on an uncertainty analysis of the estimates produced in the risk characterization step. Goodness of the decisions made based on the risk estimates is an issue belonging to the risk management process and it is not considered as an aspect of assessment performance.

The Red Book approach is the cornerstone of nearly all contemporary risk assessment related practices. Despite its several recognized limitations, practical implementations of the Red Book approach can be seen wherever risk assessment is practiced. The approach is undoubtedly established and forms the core of most subsequently developed risk and related assessment approaches. For example, a good account of application of the Red Book principles in food and nutrition risk assessment is given in Tijhuis et al. (this issue).

Understanding risk

In 1996, the Committee on Risk Characterization of the National Research Council (NRC) in USA published a report “Understanding Risk: Informing Decisions in a Democratic Society” (NRC, 1996). This report can be considered as a follow-up on the Red Book assessment framework (NRC, 1983). It focuses on re-interpreting risk characterization as an analytic-deliberative process between public officials, scientists, and stakeholders.

The purpose of the analytic-deliberative process is to improve decision making upon risks. The essential role of risk characterization is to integrate risk assessment (understanding) and risk management (action) into one risk decision process, and thereby enhance practical understanding of risks and their management options. The application area of the approach is not limited as such, but in the report the Committee positioned their considerations explicitly within the field of governmental and industry level risk management, particularly in the context of the USA. The problem owners are the public officials with a legislative mandate to protect the public health.

Risk characterization is considered as a decision-driven activity, where a diagnosis of the decision situation is needed already in a problem formulation stage. The questions addressed may be related to many different kinds of risk-related issues, for example regulating industrial processes; setting emissions standards; taxing emissions and effluents; establishing cancer potency values; informing individuals at risk; improving risk analysis techniques (e.g. selection of default assumptions) or guidelines for making inferences from data; or creating policy strategies or implementation. The questions asked in different cases may be formulated in different ways. As answers, the analytic-deliberative process synthesizes information gathered and interpreted concerning the decision options chosen for consideration. No complete agreement or single solution is required, or even expected, to be achieved.

Analytic-deliberative risk characterization is an iterative process of problem framing, process design, option and outcome selection, information gathering, and synthesis, which ultimately leads to a decision by the responsible actors (Fig. 3). The analytic-deliberative process also extends to implementation and evaluation of the decision made.

The public officials with a legislative mandate to protect the public use the assessment results in their decision making. The model of interaction is best described as negotiation. The analytic-deliberative process is an on-going process between public officials, experts, and other stakeholders that takes learning and feedback into account. The actual decision making and use of produced information, however, takes place outside the analytic-deliberative process, and the results of risk characterization are considered as only one of the inputs into the decision making.

Because the analytic-deliberative process extends to the implementation and evaluation of the decision made, the performance of the assessment process is addressed in terms of the goodness of implemented decisions. As the name analytic-deliberative process implies, evaluation is based on both analytical data and interpretation. On the other hand, the analytic-deliberative process is, in itself, a process of interpreting the quality of knowledge obtained by scientific risk assessment and deliberating the implications of that knowledge quality for decision making regarding the specific issues.

The report “Understanding risk” has gained considerable recognition among professionals working in the related fields, particularly in the USA. On the other hand, although the approach builds on the cornerstone of contemporary risk assessment (the Red Book approach), broad-scale implementation of the analytic-deliberative process as described by the report is rare, and the establishment of the approach cannot be considered very strong. However, it can be considered to have significantly influenced the development and implementation of several subsequently developed risk assessment, risk management and related approaches and practices.

IRGC risk governance framework

The International Risk Governance Council (IRGC), founded in 2003, is a private, independent, not-for-profit Foundation based in Geneva, Switzerland. Its mission is to support governments, industry, non-governmental organizations (NGOs) and other organizations in their efforts to deal with major and global risks facing society and to foster public confidence in risk governance. The IRGC risk governance framework was published in an IRGC white paper in 2005 (IRGC, 2005). The white paper intends to provide an integrated, holistic and structured approach, a framework, by which risk issues and the governance processes and structures pertaining to them can be investigated.

The purpose of the risk governance framework is to integrate scientific, economic, social and cultural aspects and include the effective engagement of stakeholders. The framework reflects IRGC’s own priorities, which are the improvement of risk governance strategies for risks with international implications and which have the potential to harm human health and safety, the economy, the environment, and/or the fabric of society at large. It particularly emphasizes dealing with so called ‘systemic risks’ (OECD, 2003). Furthermore, it places most attention on risk areas of global relevance (i.e. transboundary, international and ubiquitous risks) which additionally include large-scale effects (including low-probability, high-consequence outcomes), require multiple stakeholder involvement, lack a superior decision-making authority, and have a potential to cause wide-ranging concerns and outrage. Depending on the issue the problem owners can be various, e.g. members of governmental bodies, scientific communities, business organizations, NGOs or the civil society.

The questions asked in the IRGC risk governance framework do not cover all risk areas but its efforts are confined to (predominantly negatively evaluated) risks that lead to physical consequences in terms of human life, health, and the natural and built environment. It also addresses impacts on financial assets, economic investments, social institutions, cultural heritage or psychological well-being as long as these impacts are associated with the physical consequences. By linking risk governance with societal context, the framework reflects the important role of risk–benefit evaluation and the need for resolving risk–risk trade-offs. The pre-assessment phase frames the issue (what risks, what boundaries, who are stakeholders, what is the capability to address the problem). Scientific risk assessment describes and quantifies the physical aspects (potential damages, probability of occurrence, cause-effect relationships, measures to tackle the problem). In contrast, concern assessment describes societal and psychological aspects (public concerns and perceptions, social response, roles of institutions, governance structures, media, stakeholder objectives and values, inequities). Characterization and evaluation look at the societal outcomes in the arena of possible actions (economic, environmental, quality of life, ethical issues, risk reduction, substitution, or compensation, stakeholder commitment). Risk management considers aspects of decision making related to the issue (responsible parties, management options, priorities, trade-offs, effectiveness of measures).

The answers to risk questions always refer to a combination of two components: the likelihood of potential consequences and the severity of consequences of human activities and/or natural events. Such consequences can be positive or negative, depending on the values that people associate with them. Investigating systemic risks goes beyond the usual agent-consequence analysis and focuses on interdependencies and spill-overs between risk clusters. IRGC’s approach puts particular emphasis on categorizing the knowledge about the cause-effect relationships considered in the assessment sphere. The risks can be categorized as simple, complex, uncertain, or ambiguous. The categorization of risks can be used as the basis for choosing risk management strategies and deciding on the appropriate level of stakeholder involvement.

The process of handling systemic risks is a holistic approach to hazard identification, risk assessment, concern assessment, tolerability/acceptability judgments and risk management (Fig. 4). The process breaks down into three main phases: ‘pre-assessment’, ‘appraisal’, and ‘management’. A further phase, consisting of ‘characterization’ and ‘evaluation’ of risk, is placed between the appraisal and management phases and, depending on whether those charged with the assessment or those responsible for management are better equipped to perform the associated tasks, can be assigned to either of them. The risk process has ‘communication’ as a companion to all phases of addressing and handling risk and is itself of a cyclical nature. However, the clear sequence of phases and steps offered by this process is primarily a logical and functional one and will not always correspond to reality.

The concept of risk governance comprises a broad picture of risk: not only does it include what has been termed ‘risk management’ or ‘risk analysis’, it also looks at how risk-related decision-making unfolds when a range of actors is involved. Governing choices in modern societies is seen as an interplay between governmental institutions, economic forces and civil society actors (such as NGOs).

The intended use of the framework or assessments conducted according to its principles has not been explicitly specified. In principle, the range of users can be as broad as the range of problem owners, but particularly those with the power to influence and manage systemic risks in a global context.

The model of interaction is best described as participation. Multiple stakeholders and different aspects of risk are integrated into a single framework. The framework does, however, build on relatively sharp demarcations between expert-driven risk assessment practices, decision maker-driven risk management practices, and distinct practices of stakeholder involvement.

Performance is evaluated separately for the assessment sphere and the management sphere. The state and quality of the knowledge applied in the risk assessment is evaluated in terms of complexity, uncertainty, and ambiguity, and the results of the evaluation serve as an important input into the risk characterization and evaluation phase. In the risk management phase, performance is evaluated by a procedure adopted from the decision theory. Risk management options are generated, assessed, evaluated, selected, implemented, and monitored. The view on the risk management performance can be characterized as a checklist-type quality assurance procedure that ensures that all steps in the sequence have been given proper attention. However, the practical work is not meant to be based strictly on the sequence. Rather, it is a dynamic process where different steps are iteratively improved whenever new information and understanding becomes available.

IRGC was founded in 2003 and the risk governance framework was published in 2005. Hence, the framework can be considered a relatively novel construct. Nevertheless, the framework appears to be well recognized among actors in environmental health assessment, and can be assumed to have influenced the thinking in this field. However, because the framework does not describe any specific assessment or governance practice, it is difficult to estimate to what extent it has been applied in practice. Therefore, in terms of establishment the framework could be considered a relatively well-established theoretical construct, but not broadly applied in practice.

Chemical safety assessment: REACH

Registration, Evaluation, Authorization and Restriction of Chemicals (REACH) is a European Community Regulation on chemicals and their safe use. It aims to improve the protection of human health and the environment through better and earlier identification of the intrinsic properties of chemical substances. Under REACH, a chemical safety assessment (ECHA 2008a) is required if a substance is manufactured or imported into the European Union (EU) at 10 tonnes or more per year per registrant. Comprehensive description, guidance and documentation on REACH can be found on the European Chemicals Agency website (http://echa.europa.eu/).

The purpose of the assessment (Chemical Safety Assessment, CSA) is to evaluate risks arising from the manufacture and use of a substance, and to define conditions under which the manufacture and use are safe in terms of both human health and the environment. The assessment covers the manufacture, all identified uses (processing, formulation, consumption, storage, keeping, treatment, filling, production of an article or any other utilization) and the life cycle stages resulting from these, and health risks are evaluated for workers, consumers, and those exposed through the environment. The novelty of REACH is that the responsibility to assess and manage the risks is placed on industry. Hence, the problem owner is the manufacturer or importer of the chemical substance.

The question asked in the assessment is whether a chemical substance poses a health or environmental hazard, and if so, what uses and use conditions can be considered safe in terms of both human health and the environment. As an answer, the substance is classified and labeled according to hazards related to its use. If a substance meets certain classification criteria in regard to the potential hazards, safe exposure scenarios and use conditions are described.

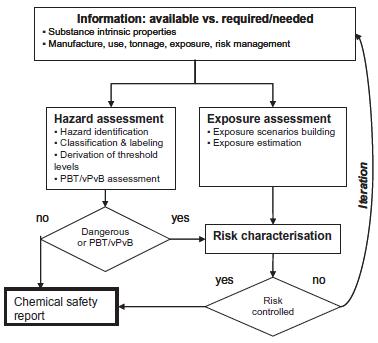

The assessment process consists of a hazard assessment, exposure assessment and risk characterization (Fig. 5). The latter two are conducted only for substances classified as dangerous (based on Directive 67/548/EEC), PBT (persistent, bioaccumulative and toxic) or vPvB (high persistency and high tendency to bioaccumulate, but not necessarily proven toxicity) in the hazard assessment. Exposure scenarios are defined and exposure estimated based on all identified uses, use conditions and life stages of the substance. Risk is characterized by comparing the estimated exposures to safe exposure levels. Risks are considered to be adequately controlled when exposures do not exceed the safe levels, or the emissions and exposures are minimized or avoided. If risks are not under control, the assessment is refined by obtaining better data on the substance properties or exposures, or by changing the manufacturing or use conditions. This iterative process continues until the risks are shown to be under control, and a so-called final exposure scenario is defined. If risks cannot be shown to be controlled for a specific use, and no more iterations are possible or economically viable, the chemical safety report must advise against the use of the chemical.
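The iterative comparison of estimated exposure against a safe exposure level can be sketched as a simple loop. The Python example below is a minimal illustration under stated assumptions, not the procedure defined in the ECHA guidance: the function names are hypothetical, each entry in the list stands for the exposure estimate after one further refinement, and the alternative route of showing that emissions and exposures are minimized or avoided is left out.

```python
from typing import Optional, Sequence, Tuple


def risk_characterization_ratio(exposure: float, safe_level: float) -> float:
    """Ratio of estimated exposure to the safe exposure level (same units)."""
    if safe_level <= 0:
        raise ValueError("safe_level must be positive")
    return exposure / safe_level


def refine_until_controlled(
    exposure_estimates: Sequence[float], safe_level: float
) -> Tuple[Optional[int], str]:
    """Walk through successive refinements of the exposure estimate.

    Returns the index of the first iteration in which exposure does not
    exceed the safe level, together with the outcome. If no available
    iteration shows control, the outcome is to advise against the use.
    """
    for iteration, exposure in enumerate(exposure_estimates):
        if risk_characterization_ratio(exposure, safe_level) <= 1.0:
            return iteration, "final exposure scenario"
    return None, "advise against use"


# Hypothetical example: two refinements bring the estimate below the safe level.
outcome = refine_until_controlled([3.0, 1.5, 0.8], safe_level=1.0)
```

In practice the list of estimates is not known in advance; each refinement depends on what better data or changed use conditions are feasible, which is why the loop ends either with a controlled scenario or with advice against the use.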

Assessment results are used in communicating the substance properties and safe use conditions (operational conditions and required risk management measures) to the downstream users in the supply chain. The CSA is also used as an important source of information in substance evaluations conducted by the REACH authorities. Substance evaluations are performed for all priority substances. These are substances for which there is some health or environmental concern and which meet the priority criteria developed by the REACH authorities.

The model of interaction adopted by the approach is best described as transfer and translate, although to some extent also as participation. Manufacturers and importers of the same substance are encouraged to work in collaboration when conducting the risk assessment. The assessor collects information on the exposure conditions along the supply chain, and may establish a dialog with representative customers in doing so. The downstream users also have the right to notify the assessor regarding their own uses of the substance. When the assessment is completed, the assessor is obliged to communicate the results to the downstream users.

The performance of the assessment is formally evaluated by the REACH authorities. The quality of information used has to meet the minimum information requirements. Uncertainty analysis is recommended but voluntary. The European Chemicals Agency (ECHA) performs compliance checks for a selection of registration dossiers (including the CSA). It is stated in the guidance document on dossier and substance evaluation that the percentage of checked dossiers is not to be lower than 5% of all dossiers received by the agency for each tonnage band, and that both random and nonrandom selection methods are applied. The aim of the process is to ensure that all required information is included in the dossier, and that this information is adequate. The contents of the CSA are also further reviewed by REACH authorities in case a substance evaluation is conducted.
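
As a small arithmetic illustration of the 5% rule, the following sketch computes the minimum number of dossiers to be checked in each tonnage band. The band labels and dossier counts are invented for the example and do not describe actual ECHA registrations.

```python
def minimum_checked(dossiers_per_band: dict, min_percent: int = 5) -> dict:
    """Smallest whole number of dossiers per band that is at least min_percent
    of the dossiers received (integer arithmetic avoids rounding surprises)."""
    return {
        band: (count * min_percent + 99) // 100  # ceiling of count * percent / 100
        for band, count in dossiers_per_band.items()
    }


if __name__ == "__main__":
    # Hypothetical counts of received dossiers per tonnage band.
    received = {"band A": 1000, "band B": 230, "band C": 41}
    print(minimum_checked(received))
```

Rounding up within each band, rather than over the total, follows the wording that the share must not fall below 5% "for each tonnage band".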

Implementation of REACH is still in its early stages. Therefore, the assessment process has not yet been implemented to a large extent in practice. However, due to its regulatory status in the EU, the methodology will become widely established in the coming years.

Environmental impact assessment: YVA

YVA is a Finnish regulatory framework for environmental impact assessment (Act on Environmental Impact Assessment Procedure 468/1994, revised 267/1999 and 458/2006; EIA Decree 794/1994, revised 268/1999 and 713/2006). It is a national implementation of the European EIA Directive (Council Directive 85/337/EEC of 27 June 1985 on the assessment of the effects of certain public and private projects on the environment, amend. 97/11/EC, 2003/35/EC and 2009/31/EC), and can therefore be assumed here to be somewhat representative of the whole target area of the directive as well as of the mainstream theories of EIA underlying the directive. The aim of the YVA regulation is to promote consideration of environmental issues, including environmental health issues, when deciding upon permissions and constraints for activities which may have wide societal and environmental implications. The framework emphasizes information flow and public participation in impact assessments. The main specific characteristics of YVA in regard to the European EIA Directive are its confinement to large projects (only approximately 50 assessments/year), and the legal requirement for participation in two phases (Jantunen and Hokkanen, 2010).

The purpose of a YVA assessment is to evaluate all potential environmental impacts of a proposed large-scale project. The assessment should take into account health, environmental and social impacts as well as technical and economic issues. Problem owners are those who intend to plan and execute the project, and they have the legal obligation to initiate the assessment process.

The YVA process addresses questions related to potential impacts of planned projects on human and animal health and well-being, environment (e.g. soil, water, air, climate, and vegetation), composition of society (e.g. building, landscape, cultural heritage) and exploitation of natural resources. Annually, 30–50 projects undergo the assessment prescribed by the EIA act in Finland (Pölönen et al., 2010). Smaller projects undergo a lighter environmental assessment procedure in the form of the environmental permit system. Answers are provided as impact estimates and a synthesis of all quantitative and qualitative information gathered concerning the potential impacts of the planned activity, of possible alternative activities, and of a situation where no activity takes place.

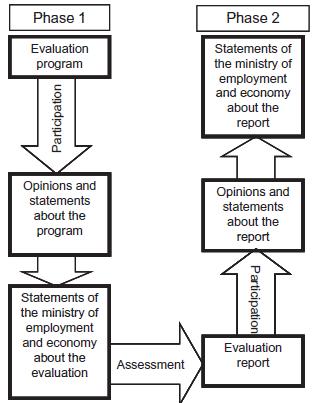

The assessment process is divided into two phases: planning of the assessment, and execution and reporting of the assessment (Fig. 6). In the planning phase, an evaluation program is constructed based on all available information on the project and potential issues related to it. Statements on the evaluation program are requested from the stakeholders by an appointed liaison authority, typically an unbiased, neutral expert from a regional environmental administration or from the Ministry of Employment and the Economy. After this, the assessment is conducted and reported. The final evaluation report should contain detailed information on all the relevant issues, such as technical applications, land use, environmental protection, comparison of alternatives, and suggestions for a follow-up program. However, there are no specific legal requirements for the contents of the assessment. Statements on the final assessment report are again requested from the stakeholders by the liaison authority.

Information produced in the assessment is used by the public officials responsible for making the decision about permission and possible restrictions for project execution. There is no legal requirement to comply with the conclusions of the assessment, but the assessment results, including stakeholder statements, must be taken into account by an authority in the decision making process. The model of interaction can be described as participation on the one hand and as transfer and translate on the other. The procedure enables and emphasizes public participation, and there are no restrictions on who can be considered a stakeholder. All interest groups, particularly people living near the site of the planned activity, are given a possibility to state their opinions and concerns. Participation is arranged in the form of public hearings, small group discussions and public gatherings at certain phases along the assessment process. However, the impact of the stakeholder statements on the assessment and on the related decision making and follow-up is often unclear. Despite the participatory approach towards the public, the interaction between YVA assessment and the related decision making has been identified to be weak (Pölönen et al., 2010).

Performance evaluation is based on quality control by the liaison authority. However, stakeholders can also give comments on the quality of the assessment. The framework is widely established in Finland due to its regulatory status. Similar kinds of environmental impact assessment practices also take place in all EU member states under the same EIA Directive, as well as in many other countries. However, due to the variation in the scale and nature of projects and the lack of legally binding requirements for the assessment contents and the use of assessment results in related decision making and follow-up, application of environmental impact assessment can vary widely. A research project on the effectiveness of YVA has recently been carried out, and a summary of the results has been reported by Pölönen et al. (2010).

Health impact assessment

Health, social, economic and other policies in both the public and private sectors are closely interrelated, and proposed decisions in one sector may impact objectives in other sectors. Health Impact Assessment (HIA), as proposed by the World Health Organization (WHO) (WHO, 1999, http://www.who.int/hia/en/), is a combination of procedures, methods and tools for judging the potential health effects of a policy, program or project on a population, particularly on vulnerable or disadvantaged groups. Hence, it is a tool to dynamically improve health and well-being across sectors. However, it should be noted that many people and organizations have proposed definitions for HIA, and there is no ‘correct definition’ but rather a variety of ways in which HIA can be described.

The purpose of HIA is to inform decision makers about the potential health effects of a policy, program or project, particularly on vulnerable or disadvantaged population groups, and to provide recommendations for maximizing the proposal’s positive and minimizing the negative health effects. It also aims to address inequalities in the potential health impacts and to promote joined-up working and participation. Problem owners are those with an interest or mandate to deal with the specific issue at hand, for example project managers or governmental institutes. They can thus be either those with the means to assess the issue or those with the means to make decisions upon the issue.

The ultimate question asked is of the form: “How to maximize a proposal’s positive health effects and minimize its negative health effects?” HIA is thus a positive approach, as it does not only look for negative impacts. The issues addressed can be various, for example building a new road near residential areas, increasing runway and passenger capacity at an airport, a common agricultural policy, or a local village school policy for safer routes to school. The answer is provided by highlighting the relevant positive and negative health impacts and their distribution in the population, and by identifying the vulnerable population groups. Recommendations are given concerning the decision at hand. Health impact estimates can be qualitative or quantitative. In terms of quantification, WHO has strongly promoted the use of the disability-adjusted life year (DALY), which is a summary measure of population health.
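
To make the DALY concrete, the following sketch uses its basic form, in which DALYs are the sum of years of life lost due to premature death (YLL) and years lived with disability (YLD). Age weighting and discounting are left out, and all input figures are invented for illustration.

```python
def years_of_life_lost(deaths: float, remaining_life_expectancy: float) -> float:
    """YLL: number of deaths times remaining life expectancy at age of death."""
    return deaths * remaining_life_expectancy


def years_lived_with_disability(cases: float,
                                disability_weight: float,
                                duration_years: float) -> float:
    """YLD: cases times disability weight (0 = full health, 1 = death) times duration."""
    return cases * disability_weight * duration_years


def daly(deaths, remaining_life_expectancy, cases, disability_weight, duration_years):
    """DALY = YLL + YLD (basic form, no discounting or age weighting)."""
    return (years_of_life_lost(deaths, remaining_life_expectancy)
            + years_lived_with_disability(cases, disability_weight, duration_years))


if __name__ == "__main__":
    # Hypothetical health impact of one policy option.
    print(daly(deaths=10, remaining_life_expectancy=12,
               cases=200, disability_weight=0.25, duration_years=2))
```

Computing the same measure for each policy option allows mortality and morbidity impacts to be compared on a single scale.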

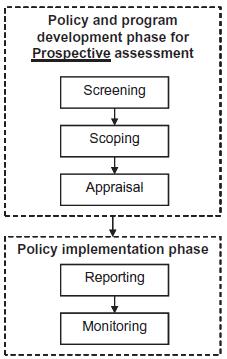

The starting point of an HIA process is often a proposal or suggestion to change an existing policy, or to launch a completely new policy or project. An assessment should preferably be conducted at an early stage to better support decision making. While there is no single agreed method for undertaking HIA, a general pattern has emerged. Guidance documents often break the process into four, five or six stages (Fig. 7). Despite the differing number of stages, there are no large differences between the methods. The work is often done in three tiers, starting from a crude estimation of health impacts and moving on to in-depth analyses and reviews of the impacts. In the screening phase, potential linkages between a policy, program or project and health are sought, and different aspects of health that could be affected are identified. If potential health impacts are identified, the process continues to a scoping phase. The aim of scoping is to define the question addressed in the assessment, and to identify further information needs. Population subgroups of special interest and the degree of participation are also addressed. Once the scope is defined, an appraisal phase is conducted, in which health hazards are identified and evidence of impact is considered. When moving towards policy or program implementation, the HIA process continues with reporting and monitoring. Reporting aims at developing recommendations for reducing hazards and/or improving health. Finally, monitoring of the implementation of the proposal ensures that any recommendations that decision makers agreed to are actually carried out. Stakeholders are given an opportunity to express their opinion concerning the results. Ultimately, the goal of HIA is to create a continually developing process.

The primary users of the assessment results are intended to be those who decide upon the particular policy, program, or project. They are expected to take the assessment results into consideration, to weigh the population health interest against any other interests related to the issue at hand, and to adjust the policy, program or project to maximize the positive and minimize the negative health impacts. The model of interaction is best described as participation. The assessment is mainly carried out as collaboration between scientific experts, but other stakeholders are also given an opportunity to participate in the process. HIA clearly intends to be policy-relevant and tightly bound with policy making, but as such it contains no measures for guaranteeing involvement or use of assessment results by policy makers.

Performance can be evaluated in terms of the process, its immediate impacts, and its long-term outcomes. There are four values that link the assessment to the policy environment in which it is being undertaken: democracy, equity, sustainable development, and ethical use of evidence. Democracy means that people are to be allowed to participate in the development and implementation of policies, programs or projects that may impact on their lives. Equity arises from the idea that the assessment addresses the distribution of impacts in the population, and puts a particular emphasis on the potential effects on vulnerable sub-groups (in terms of age, gender, ethnic background or socio-economic status). Sustainable development means that both short- and long-term, as well as obvious and less obvious, impacts are to be considered. Ethical use of evidence refers to using the best available quantitative and qualitative evidence.

HIA is a well-established approach, and it is high on the agenda of some governments in Europe (at national, regional and local levels) and of international organizations including WHO and the World Bank. A similarly increased interest is reflected in the research field. The WHO approach to HIA, examples of its application, and international policies in its support are described on the WHO website (http://www.who.int/hia/en/).

Integrated environmental health impact assessment

Integrated environmental health impact assessment (IEHIA) is an assessment approach developed in two EU funded research projects: INTARESE (Integrated Assessment of Health Risks of Environmental Stressors in Europe, 2005–2011) and HEIMTSA (Health and Environment Integrated Methodology and Toolbox for Scenario Assessment, 2007–2011). The IEHIA approach has been described in a peer-reviewed journal article by the INTARESE project coordinator, Professor David Briggs (2008). IEHIA is an approach that extends the principles of integrated assessment, developed mainly in the field of environmental policy, to issues of human health.

The purpose of IEHIA is to inform policies addressing systemic risks. These policies are more wide-ranging in scope, more collaborative and more precautionary than traditionally perceived, and address complex risks within wide social, economic, and environmental contexts. IEHIA assesses interventions affecting the environment and, subsequently, human health and well-being. Impacts can be due to traditional chemical hazards or other types of factors (e.g. traffic accidents), and can be direct or indirect in their nature. Human behaviors and perceptions, as well as personal characteristics, attitudes, and values are also considered. Assessment takes account of the complexities, interdependencies and uncertainties of the real world. Integration of issues can occur in many ways, e.g. across different sources, health outcomes, sectors of administration, geographical regions, and time. The framework builds on traditional risk assessment methods, but expands them to cover more complex, multi-sectoral issues and policies (cf. Fig. 1).

The problem owners are not explicitly listed, but it appears that the approach sees the scientists as problem owners. It is their task to identify policy needs to be addressed by means of assessment. Assessment results are intended to be of use to policy makers and they, as well as other stakeholders, are engaged already in framing of the assessment problem at hand.

Different types of questions can be asked. Diagnostic assessments aim to identify and prioritize policy actions by determining whether a problem exists, and if so, its magnitude and causes. Prognostic assessments help to choose between options by evaluating and comparing the potential implications of new policies. Summative assessments evaluate the effectiveness of existing policies, and, therefore, inform decision-making on adjustments to prevailing policies. The assessment models how interventions feed through the environment to affect health, and answers are often given in the form of evaluations and comparisons of different policy scenarios. Outcomes are usually presented as impact measures. The impact indicators are selected at the issue-framing stage, and may differ substantially depending on the nature of the analysis and the stakeholders or users concerned. Indicators may be performance-based (distance from a legislative target), acceptability-related (e.g. based on public opinions), health impact measures (number of mortality/morbidity cases, disability-adjusted life years), or economic measures.

The assessment process is much more extensive than that of traditional risk assessment. More attention is focused on the earlier stages of analysis in order to ensure that the issue at hand is fully defined and agreed upon, and that an appropriate form of assessment is chosen. Effort is also put into the interpretation and evaluation of the results to make sure that they are properly understood and accepted by the stakeholders involved in the process. The assessment procedure consists of four stages: issue framing, design, execution and appraisal (Fig. 8).

The object of IEHIA is to inform policy making on systemic risks, but the approach does not explicitly specify in what kind of policy processes the assessment results are intended to be used, or how. However, the framework description implies that IEHIA serves in particular the needs of institutional policy making on regional, national, and EU-level issues of environmental and health relevance.

The model of interaction is best described as integration. Interaction with users and other stakeholders takes place in the issue framing and design phases of the assessment, as well as in appraisal of the assessment results. The framework acknowledges that methods enabling wider public participation in the assessment process are emerging (e.g. citizen panels and interactive websites). However, the core of the process, assessment execution, is considered to be an exclusive expert process.

The performance of an assessment is considered in terms of uncertainty of the assessment results. In the appraisal stage, the stakeholders can also express their views on the results and their implications for action. Appraisal also provides closure to the assessment process by linking the results back to the original objectives defined in the issue framing, and thereby helps to ensure the acceptance of the outcomes by the stakeholders.

The framework has been under development in the INTARESE and HEIMTSA projects since 2005. As the projects are still on-going, its application has thus far been limited to the assessment case studies conducted within these projects. In terms of establishment, IEHIA can be characterized as a novel approach that has not yet gained much popularity in the field of environmental health, and examples of its application are few. However, the framework builds on and extends a combination of well-established, even traditional, approaches of risk, impact, and integrated assessment.

Open assessment

Open assessment is an approach to environmental health assessment developed at the National Institute for Health and Welfare in Finland in collaboration with multiple partners in several projects funded by the EU, the Academy of Finland, and the Finnish Funding Agency for Technology and Innovation (TEKES). The methodological foundation was addressed particularly in the EU-funded BENERIS project (Benefit–Risk Assessment of Food: An iterative Value-of-Information approach, 2006–2009). The characterization of open assessment is based on information available in the Opasnet web-workspace (http://en.opasnet.org) and a report on Open risk assessment (Tuomisto and Pohjola (Eds.), 2007). The approach has been developed in the context of environmental health, but the method is applicable in all kinds of systematic knowledge creation. The approach implements the idea of mass collaboration (Tapscott and Williams, 2007) as an open trialogical process of collective knowledge creation (Hakkarainen and Paavola, 2009; Paavola and Hakkarainen, 2009) to support decision making on issues with high societal relevance.

The primary purpose of open assessment is to improve societal decisions through generation of shared knowledge and understanding among experts, decision makers, and stakeholders. A more detailed purpose of a particular assessment is always defined according to the specific problem at hand. The problem owners in an open assessment can be anyone, for example scientific experts, political decision makers, business organizations, NGOs, or members of the civil society at large.

The formulation of assessment questions is not strictly determined, but typically they are of the form: “given the defined problem, which action should be taken?”. In principle, any issue can be addressed. The question formulated for a particular assessment should include a description of the purpose, boundaries, scenarios, and intended use(s) of the assessment. Typically, the answers provide recommendations and reasoning for certain decision/action options (or sets of options) to be taken, although the specific format of the answer depends on the assessment question. Often they include causal network descriptions of factors relevant to the outcome of interest and results of different analyses, e.g. value-of-information analysis, on parts of or the whole network. Sufficient answers to questions in open assessment often require aggregation, weighing, and comparison of multiple risks, impacts, costs and benefits as well as explicit consideration of value statements.
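
As an illustration of what a value-of-information analysis can look like, the sketch below computes one common form, the expected value of perfect information (EVPI), for a decision with two options and two uncertain states. It is not necessarily the variant used in open assessment, and the states, probabilities, and utilities are invented for the example.

```python
def expected_utility(utility_by_state: dict, probabilities: dict) -> float:
    """Probability-weighted utility of one decision option."""
    return sum(probabilities[state] * u for state, u in utility_by_state.items())


def evpi(utilities: dict, probabilities: dict) -> float:
    """EVPI = E[utility when the best option is chosen knowing the state]
            - utility of the best option chosen under current uncertainty."""
    best_under_uncertainty = max(
        expected_utility(by_state, probabilities) for by_state in utilities.values()
    )
    best_with_perfect_information = sum(
        p * max(by_state[state] for by_state in utilities.values())
        for state, p in probabilities.items()
    )
    return best_with_perfect_information - best_under_uncertainty


if __name__ == "__main__":
    # Hypothetical decision: restrict or allow an activity whose hazard is uncertain.
    probabilities = {"hazard high": 0.25, "hazard low": 0.75}
    utilities = {
        "restrict": {"hazard high": -2.0, "hazard low": -2.0},
        "allow": {"hazard high": -10.0, "hazard low": 0.0},
    }
    print(evpi(utilities, probabilities))
```

A positive EVPI indicates that resolving the uncertainty could change the preferred option, which helps to decide whether further iteration of the assessment is worthwhile.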

The phases of the open assessment process resemble those of most assessment approaches: (1) issue framing, (2) designing variables, (3) executing variables and analyses, and (4) reporting, through which the process progresses in iterative cycles. What is distinctive for open assessment is that it considers assessments as open collaborative processes of creating shared knowledge and understanding. Openness means welcoming all types of knowledge, possessed by all kinds of actors and found from all types of sources, into a systematic analysis. Exclusion of participants or inputs is allowed only based on well-argued, explicated and cogent reasons. The open process brings scientific experts, decision makers, and stakeholders into the same collaborative process. Collaboration is facilitated by the Opasnet web-workspace (http://en.opasnet.org), consisting of a wiki-interface, a modeling environment, and a database (see Fig. 9).

Both the use process and the users can be of any kind, depending on the problem addressed, but they should be explicitly specified for each assessment. Societally important decisions are made by decision makers in policy and business, but also by lay-people in their everyday lives. The intended user may be, but is not necessarily, the same as the problem owner. There can also be multiple secondary uses and users for assessment results.

The model of interaction that open assessment builds on is learning. To enhance effectiveness of assessments, it is essential that intended users are strongly engaged in the assessment process, and that their needs and capabilities are taken into account in all phases of the assessment process.

In an open collaborative process, all information is continuously subject to open criticism. Performance of an assessment is evaluated in terms of (a) quality of the information produced, (b) applicability of the information in the intended use context, and (c) efficiency of the assessment process. The performance evaluation framework is used as a means for evaluating past assessments, but also, and in particular, as a means to guide design and execution of assessments. All assessment participants can rate the information produced in terms of its scientific quality and usefulness with a rating tool in the Opasnet web-workspace.

The systematic development of open assessment began in 2006. The ideas, theories and technological enablers that differentiate open assessment from the mainstream assessment approaches can all be considered emergent. Open assessment and Opasnet have been developed and tested in several assessments and research projects. They are approaching readiness for full-scale use, but experience of broad participation is still limited. In terms of establishment, open assessment can be described as a novel, emergent approach that has not yet gained much popularity in the field of environmental health assessment, and examples of its application are still few.

Comparison of main characteristics of approaches to environmental health assessment

The main characteristics of the assessment approaches are summarized in Tables 3a, 3b and 4 below according to the attributes of the analysis framework. The variability in scope and aggregation as well as consideration of risks and benefits among the approaches is described in Table 5. Different information sources provided quite varying kinds of descriptions of the different approaches and emphasized different aspects. Therefore, some interpretation by the authors was necessary in order to achieve complete and balanced characterizations of each approach. Overall, however, the applied analysis framework produced clear and comparable information. The characterizations are discussed below first attribute by attribute, then across the range of attributes, and finally in terms of the big picture provided by the analysis.

From Table 3a it can be observed that the purpose definitions are relatively similar across the range of approaches. The importance of supporting societally relevant decision making is addressed in every single one, although some approaches define their scope quite narrowly while others very broadly. The main differences between approaches emerge from the means for striving towards fulfillment of the stated purposes. The problem owners in different approaches vary from scientists in charge of the assessment to policy makers with a mandate to take action upon the issue at hand and onto the operators whose projects or products are being assessed. The most flexible approaches allow assessments where the problem owner can be anyone. Variation exists also in the questions asked and answers provided in assessment. While some approaches focus on estimating impacts or risks, others aim also to complement the estimates with explicit guidance on which decisions and actions to pursue and which not.

| Approach | Purpose | Problem owner | Question | Answer |
|---|---|---|---|---|
| Red Book risk assessment | Produce health risk estimates to support regulatory decisions on governmental and industry-level health risk issues | Scientists assess, policy makers in federal agencies decide | What is the estimated incidence of adverse effect caused by a substance? | Health risk estimate |
| Understanding risk | Develop understanding on practical choices regarding governmental and industry-level health risk issues | Public officials with a legislative mandate to protect the public | What is the optimal risk management decision? | (Not necessarily conclusive) synthesis of information gathered and interpreted regarding different decision options |
| IRGC risk governance framework | Improve governance of systemic risks | Anyone to whom systemic risks are of relevance | What risks, effects and concerns? Benefits vs. risks or risks vs. risks? What actions? | Estimates of chance and severity of consequences: positive or negative. |
| Chemical safety assessment: REACH | Regulatory assessment and management of health and environmental risks from the manufacture and use of chemical substances in the EU | Manufacturer or importer of a chemical substance | Does a substance pose a health or environmental hazard? What are the safe manufacture/use conditions for a potentially hazardous substance? | Classification and labeling of the substance. Acceptable manufacture/use conditions |
| Environmental impact assessment: YVA | Regulatory evaluation of potential environmental impacts of large developments to support decisions on the terms for executing the project | Project manager | What are the environmental (health and ecological) impacts of executing a planned project? | Impact estimates for (i) planned activity, (ii) possible alternative activities, (iii) no activity (obligatory) |
| Health impact assessment | Assessment of health impacts of policies, plans and programmes to (i) increase knowledge about potential impacts, (ii) inform decision-makers and affected people, (iii) facilitate adjustment of proposed policies, (iv) mitigate negative and maximize positive impacts | Health officials and professionals | What are the health impacts of a policy/plan/program? | Estimates of direct and indirect health impacts, their distribution in population, and proposals for improvement |
| Integrated environmental health impact assessment | To inform policies addressing systemic risk(s) | Scientists assess on behalf of users and stakeholders | Is there a problem, and what are its magnitude and causes? What are the potential implications of a new policy? Are existing policies effective? | Performance-based metrics and/or health impact metrics |
| Open assessment | To improve decisions on societally relevant problems regarding environment and health | Anyone | Given the defined problem(s), which action(s) should be taken? | Recommendation and reasoning for preferred decision options |

Table 3b illustrates some further differences among the approaches. The process conceptualizations vary from strict separation of risk assessment and management processes to intertwined assessment and decision making. The perspectives towards public participation and stakeholder involvement vary similarly, from limiting the assessment to an exclusive expert process to complete openness. Intended uses are in concordance with the purpose definitions, although some approaches explicate certain specific uses while the most flexible approaches allow for broad ranges of possible uses. Considering the variability in the process descriptions and interaction models (Table 4) adopted, it may be observed that different approaches adopt quite different means for attempting to serve relatively similar uses and purposes. Furthermore, the mapping of the interaction models in Table 4 shows that in general the approaches are more prone to disengagement than engagement.

| Approach | Process | Use | Performance | Establishment |

|---|---|---|---|---|

| Red Book risk assessment | Risk assessment and risk management strictly separated | Policy decisions by public officials | Uncertainty of risk estimates | Cornerstone of contemporary risk assessment |

| Understanding risk | Risk assessment and management bridged with analyticdeliberative risk characterization | Policy decisions by public officials | Analytic-deliberative interpretation of the quality of knowledge and evaluation of decisions | Well recognized and influential, rarely implemented as such |

| IRGC risk governance framework | Risk governance cycle across risk assessment and management | Institutional and other decision making processes that address systemic, global risks | Quality of knowledge about hazards and risks; simple, complex, uncertain, ambiguous. Quality assurance procedure for risk management options | Framework is well recognized, but not easily applicable as such |

| Chemical safety assessment: REACH | Integrated risk assessment and management | Information on acceptable manufacture/use conditions for the supply-chain. Evaluation and authorization of the substance by REACH authorities | Standard information requirements for assessments. Quality control and review by REACH authorities | Recently developed, but will be widely used due to its regulatory status. Built on traditional methods |

| Environmental impact assessment: YVA | Two phase assessment process with public hearings before and after the assessment | Environmental permits. Also project planning, public awareness and political decision making. Assessment must be addressed, but not necessarily complied with, in regulatory decision-making | Quality control by the liaison authority. Statements from stakeholders, public critique | Widely used in Finland due to its regulatory status. Similar approaches common in EU and elsewhere |

| Health impact assessment | Assessment addressing both pre- and post-implementation phase | Evaluation of policies, plans and programmes by health officials and policy makers | Democracy in policy-making, equity in population, sustainable development, ethical use of evidence. Open appraisal of assessment report by public | High on the agenda of some governments and international organizations. Several examples of application exist |

| Integrated environmental health impact assessment | Integrated assessment for policy on environment and health | Primarily regional, national, or EU-level institutional decision making | Uncertainty analysis, outcomes vs. defined goals, stakeholder acceptance | Novel, but extending on well-established approaches |

| Open assessment | Collaborative knowledge creation among experts, policy makers, and stakeholders | Multiple possible uses and users depending on problems addressed. User engagement important | Evaluation of (i) quality of content, (ii) applicability, (iii) efficiency of assessments intertwined in the assessment process | Novel and building on emergent approaches, ideas, and technologies |

| Approach | Trickle-down | Transfer and translate | Participation | Integration | Negotiation | Learning |

|---|---|---|---|---|---|---|

| Red Book risk assessment | * | |||||

| Understanding risk | * | |||||

| IRGC Risk governance framework | * | |||||

| Chemical safety assessment: REACH | * | |||||

| Environmental impact assessment: YVA | * | |||||

| Health impact assessment | * | |||||

| Integrated environmental health impact assessment | * | |||||

| Open assessment d | * |

Perspectives to evaluating assessment performance also vary. The biggest source of differences appears to be whether the goodness of assessment is considered in terms of conforming to a rigorous assessment procedure, the quality of assessment results, or the outcomes of using those results. Some differences also exist in whether performance is predominantly evaluated in qualitative or quantitative terms. Characterizations of establishment indicate that there are traditional, well-recognized, and broadly applied, but also emergent, novel, and less known approaches included in the overview. For the purpose of this analysis, such diversity is valuable in order to get a grasp of the contemporary conventions, but also to identify the directions where the field of environmental health is shifting.

By considering characterizations of different approaches across the attributes of the analysis framework, further interesting observations can be made. One concerns how the approaches vary in their consideration of risks and benefits (Table 5). Differences exist in several dimensions: (i) whether only single or multiple phenomena are considered, (ii) whether benefits or positive impacts are considered in addition to risks and negative impacts, (iii) how estimates of positive and negative impacts are compared and/or aggregated, and (iv) which domains and respective phenomena are included in scrutiny (cf. Fig. 1). Again, the range spans from very strict and narrow definitions of estimating risks of single substances to overarching, nearly all-embracing approaches where health risks are considered as factors in a broader context of multiple direct and indirect risks, benefits, and impacts.

| Approach | Consideration of risks and benefits |

|---|---|

| Red Book risk assessment | Health risk estimates for single substances |

| Understanding risk | Health risk estimates from risk assessment combined with any other information brought up in deliberation |

| IRGC risk governance framework | Risk–benefit and risk–risk comparisons regarding risks related to physical impacts affecting human health and safety, economy, environment, and/or the fabric of society at large. If risk is negligible or very significant, benefits are not assessed |

| Chemical safety assessment: REACH | Health and environmental risks for single substances. In case of authorization or restriction of a chemical/product, wider impacts of the action can also be evaluated in terms of direct and indirect health, economic, and social impacts and societal distribution of these impacts (ECHA, 2008b) |

| Environmental impact assessment: YVA | Risks, impacts and benefits to environment, society, and human health according to the characteristics of the assessed project. Different aspects are emphasized in different uses |

| Health impact assessment | Direct and indirect risks, impacts and benefits to human health. Aggregation of health impacts to a single measure advocated |

| Integrated environmental health impact assessment | Multiple benefits, risks, and impacts to human health in the broader context of environment, society, economy, and technology. Indicators for presenting assessment results differ according to the type of assessment and the stakeholders and users involved |

| Open assessment | Any impacts considered relevant in relation to the assessment question. Presentation, aggregation, and comparison according to the intended use(s) |

Unfortunately, this analysis does not have the power to reveal very much of the details within the dimensions of scope and aggregation of outcomes of interest. It rather aims to identify and explicate their nature. However, it may be noted that the means for aggregating multiple risks and benefits in environmental health assessment are mostly the same as in any other field concerned with health, for example DALYs, QALYs (quality adjusted life years) and monetizing.
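
As a rough illustration of the aggregation mentioned above, the following sketch sums hypothetical risks and benefits into a single net DALY figure, using the standard decomposition of DALYs into years of life lost (YLL) and years lived with disability (YLD). The `HealthEffect` class, the function names, and all numbers are assumptions made for this example only; they are not taken from any of the reviewed approaches, and the sketch omits age weighting and discounting.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class HealthEffect:
    """One health outcome attributed to an assessed option (hypothetical values)."""
    name: str
    deaths: float                # change in deaths (negative = deaths avoided)
    years_lost_per_death: float  # average remaining life expectancy at death
    cases: float                 # change in non-fatal cases (negative = cases avoided)
    disability_weight: float     # 0 = full health, 1 = equivalent to death
    duration_years: float        # average duration of the non-fatal condition


def dalys(effect: HealthEffect) -> float:
    """DALY = YLL + YLD. A negative value means a health gain."""
    yll = effect.deaths * effect.years_lost_per_death
    yld = effect.cases * effect.disability_weight * effect.duration_years
    return yll + yld


def net_dalys(effects: Iterable[HealthEffect]) -> float:
    """Sum DALYs over all effects so that risks and benefits share one scale."""
    return sum(dalys(e) for e in effects)


# Hypothetical inputs: one adverse effect (risk) and one beneficial effect.
risk = HealthEffect("adverse effect", deaths=2, years_lost_per_death=10,
                    cases=100, disability_weight=0.25, duration_years=1)
benefit = HealthEffect("beneficial effect", deaths=-5, years_lost_per_death=10,
                       cases=-40, disability_weight=0.25, duration_years=2)

print("Net DALYs:", net_dalys([risk, benefit]))
```

A negative total indicates that, under these made-up inputs, the estimated health benefits outweigh the risks. QALYs or monetized values could be aggregated in the same additive manner, provided that all impacts are first expressed in the common unit.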

Certain patterns are also identified among the analyzed approaches, for example by considering the novelty of approaches and whether they are developed and applied in a regulatory or an academic setting. In the more regulatory approaches, particularly YVA and REACH, the problem framing, question setting and consequently the assessment process are relatively predetermined according to the specific regulatory uses of risk prevention. The more academic approaches, e.g. IRGC risk governance framework and Open assessment, allow for much more flexibility in adapting the question setting and the assessment process according to changing contexts and situations. On the other hand, the flexibility could also be interpreted as vagueness of the approach.

A somewhat similar pattern exists in terms of novelty or traditionalism of the theory bases of the approaches. Traditionalism seems to be more strongly linked with disengaging perspectives, and novelty with engaging perspectives, towards the relationships between assessment and decision making as well as stakeholders and the public. There also seems to be a correlation between regulatory approaches and traditionalism as well as between academic approaches and novelty. Furthermore, it appears that the more traditional or regulatory an approach is, the more established it is, although this cannot be considered a universal rule.

All in all, the most distinctive overall characteristic of contemporary environmental health assessment is plurality, although the weight of establishment seems to be mostly on relatively traditional risk assessment as well as regulatory assessment. However, the characterizations of different approaches to environmental health assessment raise the question of whether, and to what extent, the stated aims of the approaches, the conceptualizations of the approaches, and their practical applications actually meet. In other words, are the assessment purposes fulfilled, and do the approaches provide sufficient means to achieve that, even in theory? For example, in several approaches the evaluation of performance does not seem to consider aspects of using the results of the assessment at all, indicating that these approaches may not try to maximize what is stated as their aims, but possibly something else instead. Somewhat similarly, one may well ask if the approaches which do not explicitly consider intended use in their process descriptions, and which apply models of interaction that do not provide much power for the user side, even seriously strive for their stated purposes. Then again, the more engaging approaches often provide little practical means for achieving the interest, attention and involvement of the user side, and, thereby, for reaching the desired level of engagement. One may also well ask whether environmental health assessment professionals truly consider the engagement of users and stakeholders essential and desired, or whether it is rather seen as an obligatory add-on to a fundamentally expert-driven assessment process. Also, little guidance is usually provided on how to manage, in practice, assessments with broad scopes and high levels of aggregation across domains, outcomes of interest, and types of phenomena considered.

The overview of the eight approaches above can be considered sufficiently representative to reveal the essential aspects of the complex field of environmental health assessment. However, it could well be reasoned that, for example, nuclear safety assessment as described by the International Atomic Energy Agency (IAEA, 2010), life cycle assessment (LCA) as described by the Science Applications International Corporation (SAIC, 2006) for the United States Environmental Protection Agency (U.S. EPA), or the NRC’s risk-based decision-making framework (NRC, 2009) that builds on the Red Book risk assessment and Understanding risk approaches, should have been included in the overview. Their inclusion would have added to the plurality of approaches, probably in terms of all of the attributes of analysis. However, according to the authors’ understanding, it would not have notably affected the main conclusions described below.

Conclusions

In several aspects, the theoretical ideals, conceptual means and common practices seem to be quite far from each other in the field of environmental health assessment. This is also acknowledged by Knol (2010) in her characterization of the current status of integrated assessment approaches in environmental health: “integrated environmental health impact assessment might seem too complex and extensive to carry out [...but] integrated environmental health impact assessment is not extensive and complex enough.” The emerging assessment approaches are more complex than the currently established ones. While pursuing more flexibility, engagement, and wide-ranging integration, the novel and academic approaches may lose the power of detailed guidance that the more narrowly scoped and rigorous regulatory and traditional approaches have. Then again, it appears that even the new integrated approaches are still too simple to effectively analyze and solve many of the current problems.

Despite the challenges in practical application, there are clear tendencies towards (a) increasing engagement between assessment and management as well as stakeholders, (b) pragmatic framing of assessments according to specific and practical policy needs, (c) integration of multiple benefits and risks from multiple domains, and (d) explicit incorporation of both scientific facts and value statements in assessments. These tendencies can be considered as a response to the inherent challenges brought about by the complexity of environmental health assessment, as well as an indication of the incapability of the traditional and established approaches to sufficiently serve the whole range of needs in policy making.

How to address the above issues in assessments in practice is a clear development need for the novel approaches and the field of environmental health assessment as a whole. For example, many means and tools already exist for stakeholder engagement and aggregation of multiple risks and benefits to support decision making. However, they need to be developed as feasible and essential aspects of functional assessment and policy processes. Therefore, an important question in further development is whether an approach is flexible enough to incorporate current and future means and tools required to fulfill the declared aims.

From an outsider’s perspective, the most interesting aspects of environmental health assessment are likely to be found among the emerging approaches. While the traditional and regulatory assessment approaches resemble those common also in other fields of assessment, the above stated aspects of engagement, pragmatism, integration, and explicit inclusion of values can be considered as interesting and innovative particularities of assessment in the field of environmental health.

Conflict of Interest

The authors declare that there are no conflicts of interest.

Acknowledgments