Guidance and methods for indicator selection and specification

This document explains what is meant by the term indicator in the context of Intarese, what indicators are needed for, and how they can be used in integrated risk assessments. Some of the graphs presented in this text can be found in an Analytica file.

KTL/MNP (E. Kunseler, M. Pohjola, J. Tuomisto, L. van Bree)

Introduction

At this stage of the Intarese project (18 months, May 2007), the integrated risk assessment methodology is taking form and is about to become ready for application in policy assessment cases. Within subproject 3, case studies have been selected and protocols for case study implementation are being developed. The issue frameworks have been formulated and full-chain frameworks of the policy assessment case studies are being developed correspondingly. Indicator selection and specification can be used as a bridge from the issue framing phase to actually carrying out the assessment. This guidance document provides information on selecting and specifying indicators and variables and on how to proceed from issue framing to the full-chain causal network description of the phenomena that are assessed.

There are several different interpretations of the term indicator and several different approaches to using indicators. This document is written in order to clarify the meaning and use of indicators as is seen applicable in the context of integrated risk assessment. This guidance emphasizes causality in the full-chain approach and the applicability of the indicators in relation to the needs of each particular risk assessment case. In the subsequent sections, the term indicator in the context of integrated risk assessment is clarified and an Intarese-approach to indicator selection and specification is suggested.

Integrated risk assessment

Before going any further in discussing indicators and their role in integrated risk assessment, it is necessary to consider some general features of integrated risk assessment. The following text in italics is a slightly adapted excerpt from the Scoping for policy assessments - guidance document by David Briggs [1]:

Integrated risk assessment, as applied in the Intarese project, can be defined as the assessment of risks to human health from environmental stressors based on a whole system approach. It thus endeavours to take account of all the main factors, links, effects and impacts relating to a defined issue or problem, and is deliberately more inclusive (less reductionist) than most traditional risk assessment procedures. Key characteristics of integrated assessment are:

- It is designed to assess complex policy-related issues and problems, in a more comprehensive and inclusive manner than that usually adopted by traditional risk assessment methods

- It takes a full-chain approach – i.e. it explicitly attempts to define and assess all the important links between source and impact, in order to allow the determinants and consequences of risk to be tracked in either direction through the system (from source to impact, or from impact back to source)

- It takes account of the additive, interactive and synergistic effects within this chain and uses assessment methods that allow these to be represented in a consistent and coherent way (i.e. without double-counting or exclusion of significant effects)

- It presents results of the assessment as a linked set of policy-relevant indicators

- It makes the best possible use of the available data and knowledge, whilst recognising the gaps and uncertainties that exist; it presents information on these uncertainties at all points in the chain

Building on what was stated above, some further statements about the essence of integrated assessment can be given:

- Integrated environmental health risk assessment is a process that produces as its product a description of a certain piece of reality

- The descriptions are produced according to the (use) purposes of the product

- The risk assessment product is a description of all the relevant phenomena in relation to the chosen endpoints and their relations as a causal network

- The risk assessment product combines value judgements with the descriptions of physical phenomena

- The basic building block of the description is a variable, i.e. everything is described as variables

- All variables in a causal network description must be causally related to the endpoints of the assessment

Terminology

In order to make the text in the following sections more comprehensible, the main terms and their uses in this document are explained here briefly. Note that the meanings of the terms may overlap and the categories are not exclusive. For example, a description of a phenomenon, say mortality due to traffic PM2.5, can simultaneously belong to the categories of variable, endpoint variable and indicator in a particular assessment.

Variable

Variable is a description of a particular piece of reality. It can be a description of physical phenomena, e.g. daily average of PM2.5 concentration in Kuopio, or a description of a value judgement, e.g. willingness to pay to avoid lung cancer. Variables (the scopes of variables) can be more general or more specific and hierarchically related, e.g. air pollution (general variable) and daily average of PM2.5 concentration in Kuopio.

Endpoint variable

Endpoint variables are variables that represent phenomena which are outcomes of the assessed causal network. Are endpoint variables always necessarily indicators?

Key variable

Key variable is a variable which is particularly important in carrying out the assessment successfully and/or assessing the endpoints adequately.

Indicator

An indicator is a variable that is particularly important in relation to the interests of the intended users of the assessment output or other stakeholders. Indicators are used as a means of effective communication of the assessment results. Communication here refers to conveying information about certain phenomena of interest to the intended target audience of the assessment output, but also to monitoring the status of those phenomena, e.g. in evaluating the effectiveness of actions taken to influence them. In the context of integrated assessment, indicators can generally be considered as pieces of information that communicate the most essential aspects of a particular risk assessment to meet the needs of the users of the assessment. Indicators can be endpoint variables, but also any other variables located anywhere in the causal network.

Proxy

The term indicator is sometimes also (mistakenly) used in the meaning of a proxy. Proxies are used as replacements for the actual objects of interest in a description if adequate information about the actual object of interest is not available. Proxies are indirect representations of the object of interest that usually have some identified correlation with it. At least within the context of integrated risk assessment as applied in Intarese, proxy and indicator have clearly different meanings and should not be confused with each other.

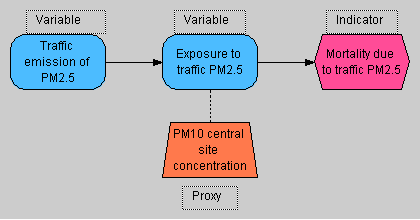

The figure below attempts to clarify the difference between proxies and indicators:

Indicators, proxies and variables.

In the example, a proxy (PM10 site concentration) is used to indirectly represent and replace the actual object of interest (exposure to traffic PM2.5). Mortality due to traffic PM2.5 is identified as a variable of specific interest to be reported to the target audience, i.e. selected as an indicator. The other two nodes in the graph are considered as ordinary variables.

Different approaches to indicators

As mentioned above, there are several different interpretations of and ways to use indicators. The different approaches to indicators have been developed for certain situations with differing needs and thus may be built on very different underlying principles. Some currently existing approaches to indicators are applicable in the context of integrated risk assessment, whereas some do not adequately meet the needs of integrated risk assessment.

In the next section, three currently existing approaches to indicators are presented as examples. Following that, a general approach to classifying indicators is given. The general classification is then used to identify the types of indicators and the ways to use indicators that are applicable in different cases of making integrated risk assessments.

Examples of approaches

WHO

One of the most recognized approaches to indicators is that of the World Health Organization (WHO). The WHO approach is based on the DPSEEA model (Driving forces, Pressure, State, Exposure, Effect, Action) and emphasizes that there are important phenomena in each step of the model that can be represented as indicators.

The WHO approach treats indicators as relatively independent objects. For example, exposure indicators are defined independently from the point of view of exposure assessment, and health impact indicators are defined independently from the point of view of estimating health impacts. Linkages between different indicators are recognized based on their relative locations within the DPSEEA model, but causality between individual indicators is not explicitly emphasized.

In the WHO approach, the indicator definitions are mainly intended as guidance or workplans of the "how to estimate this indicator?" type. The WHO approach can be considered as an attempt to standardize or harmonize the assessments of different types of phenomena within the DPSEEA model.

EEA

The approach to indicators by the European Environment Agency (EEA) attempts to clarify the use of indicators in different situations for different purposes. EEA has developed a typology of indicators that can be applied for selecting the right types of indicator sets to meet the needs of the particular case at hand.

The EEA typology includes the following types of indicators:

- Descriptive indicators (Type A – What is happening to the environment and to humans?)

- Performance indicators (Type B – Does it matter?)

- Efficiency indicators (Type C – Are we improving?)

- Total welfare indicators (Type D – Are we on the whole better off?)

The EEA indicator typology is built on the need to use environmental indicators for three major purposes in policy-making:

- To supply information on environmental problems in order to enable policy-makers to value their seriousness

- To support policy development and priority setting by identifying key factors that cause pressure on the environment

- To monitor the effects of policy responses

The EEA approach considers indicators primarily as means of communicating important information about the states of environmental and related phenomena to policy-makers.

RIVM

RIVM has also developed an indicator typology, which is strongly present in the integrated risk assessment method development work in Intarese. The four RIVM indicator sets are grouped somewhat similarly to the EEA typology, representing certain kinds of phenomena in order to answer different kinds of environmental and health concerns. However, the RIVM indicator sets are defined more in relation to the audience to which each indicator set is intended to convey its messages.

The RIVM indicator sets are called:

- Policy-deficit indicators

- Health impact indicators

- Economic consequence indicators

- Risk perception indicators

In the RIVM approach, the different sets of indicators address different phenomena from different perspectives in order to convey messages about the state of affairs in the right form to the right audiences. By combining the different sets of indicators, a whole view of the assessed phenomena can be created for weighing and appraisal and to support decision making in targeting actions in relation to the assessed phenomena. As in the EEA approach, RIVM considers indicators primarily as a means of effective communication.

General classification of indicators

There are thus many ways to classify indicators. Three approaches were presented briefly above, and several more exist. To clarify the meanings and uses of indicators, a general classification is given below that attempts to explain the different approaches in one framework by considering three aspects of indicator approaches.

Topic-based classification

The topic describes the scientific discipline to which the indicator content mostly belongs. For example, the RIVM classification mostly follows this thinking, although the policy-deficit indicators do not fall within this classification but within the reference-based classification explained below. The general topic-based classification in integrated risk assessment is:

- Source emission indicators

- Exposure indicators

- Health indicators

- Economic indicators

- Total outcome indicators (may combine source emission, exposure, health, economic issues, e.g. EEA type D indicators)

- Perception indicators (including equity and other ethical issues.)

- Ecological indicators (not fully covered in Intarese, as they are out of the scope of the project.)

The indicators in topic-based classification can be descriptions of endpoints of assessments or intermediate phenomena.

Causality-based classification

Many currently existing and applied approaches to indicators consider and describe independent pieces of information without the context of a causal chain (or full-chain approach). WHO indicators are well-known examples of this. The causality issue is also addressed further within the next classification. In integrated risk assessment, causality needs to be emphasized in all variables, including indicators.

Reference-based classification

The reference is something that the indicator is compared to, and this comparison creates the actual essence of the indicator. The classification according to the reference is independent of the topic-based classification.

| Type of indicator | Point of reference | Examples of use | Causality addressed? |
|---|---|---|---|
| Descriptive indicators | Not explicitly compared to anything. | | Possibly, often not |
| Performance indicators | Some predefined policy target | | Possibly |
| Efficiency indicators | Compared with the activity or service that causes the impact. | | Yes |
| Scenario indicators | Some predefined policy action, usually a policy scenario compared with business as usual. | | Yes |

The reference-based classification also covers indicators that can describe endpoints or intermediate phenomena in the causal network of a particular assessment.
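The way the point of reference defines an indicator can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names and all numbers are hypothetical and do not come from any Intarese case study.

```python
def performance_indicator(observed: float, target: float) -> float:
    """Distance to target: positive when the observed value exceeds the target."""
    return observed - target


def efficiency_indicator(impact: float, activity: float) -> float:
    """Impact per unit of the activity or service that causes it."""
    if activity == 0:
        raise ValueError("activity must be non-zero")
    return impact / activity


def scenario_indicator(policy_result: float, bau_result: float) -> float:
    """Difference between a policy scenario and business as usual (BAU)."""
    return policy_result - bau_result


# Hypothetical values, for illustration only.
pm25_concentration = 12.0  # ug/m3; reported as such, it is a descriptive indicator
pm25_target = 10.0         # ug/m3; assumed policy target

deficit = performance_indicator(pm25_concentration, pm25_target)
per_km = efficiency_indicator(impact=500.0, activity=2000.0)
change = scenario_indicator(policy_result=40.0, bau_result=50.0)

print(deficit, per_km, change)
```

The same underlying variable (here a concentration) can thus serve as a descriptive or a performance indicator; only the comparison changes.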

Suggested Intarese approach to indicators

In integrated risk assessment as applied in Intarese, the full chain approach is an integral part of the method, and therefore all Intarese indicators should reflect causal connections to relevant variables. According to the full-chain approach, all variables within an integrated risk assessment, and thus also indicators, must be in a causal relation to the endpoints of the assessment. Independently defined indicators, such as WHO indicators and some common uses of descriptive indicators, are thus not applicable as Intarese indicators. In principle, all types in abovementioned classifications of indicators can be used as applicable, as long as causality is explicitly addressed.

It is the communicative needs that fundamentally define the suitable types of indicators for each assessment. Therefore, the right set(s) of indicators can vary significantly between different assessments. The selection of indicators can follow the lines of either the topic-based classification or the reference-based classification, as seen reasonable. In any case, the set(s) of indicators should represent, at least in some way:

- The use purpose of the assessment

- The target audience of communication

- The importance of the indicators in relation to the assessment and its use

Indicators can be helpful in constructing and carrying out the integrated risk assessment case. They can be used for targeting efforts to the most relevant aspects of the assessments especially from the point of view of addressing the purpose and targeted users or audiences of the assessments. Indicators can also be used as the backbone of the assessment when creating the causal network description of the assessed phenomena.

Use of indicators in integrated risk assessment

- Intarese: full-chain approach → causality

- brief explanation of causality and causal network description

The Intarese approach to risk assessment emphasizes the creation of causal linkages between determinants and consequences in the integrated assessment process. The full-chain approach includes interconnected variables and indicators as the leading components of the full-chain description. The full-chain variables cover the whole source-impact chain.

The output of an integrated risk assessment is a causal network description of the relevant phenomena related to the endpoints of the assessment, in accordance with the purpose of the assessment. The final description should thus:

- Address all the relevant issues as variables

- Describe the causal relations between the variables

- Explain how the variable result estimates are derived

- Report the variables of greatest interest as indicators

- general process description from issue framing to causal network description (diagram?)

- purpose: users, use, audience... → endpoints, key vars, inds (name, scope)

- to full-chain - source to impacts → add vars

- causal relations throughout the chain (causalities)

- more detailed definition of vars → refine description (vars&relations)

- coherence of var descriptions? → refine description

- quality of (var, ind & ass) contents? → refine description (methods, causalities, data, ...)

- adequately good causal network description

- rather gradual transition of focus than clearly separate phases

- two tests to help in identifying suitable variable scope and causal relations

Issue framing addresses in particular the inclusion and exclusion of phenomena according to their relevance to the endpoints and the purpose of the assessment. Some understanding of which variables are of most interest usually exists already in the issue framing phase. Still, there is a long, and not necessarily straightforward, way from issue framing to a sufficiently complete causal network description of the assessed phenomena. The biggest challenges on this way are:

- How are variables defined and described?

- How can the causal relations between variables be defined and described?

- What is the right level of detail in describing variables?

These questions are addressed in following sections. First a general description of the process is given, then some more detailed guidance and description of indicator selection and specification is presented, and eventually a general variable and indicator structure is suggested. The text is supported with an example of an indicator description which can be found in Appendix 2.

After the issue framing phase, there should be a good general-level understanding of what is to be included in the assessment and what is to be excluded. There should also be a strong enough basis for identifying the endpoints of the assessment, the variables within the assessment scope that are most important for carrying out the assessment successfully, and the variables within the assessment scope that are most interesting from the point of view of the users and other audiences of the assessment output. These overlapping sets of endpoint variables, key variables and indicators provide a good starting point for taking the first steps in carrying out the assessment after the issue framing phase.

Once identified, the endpoint variables, key variables and indicators can be located on the source-impact chain of the full-chain approach. This forms the backbone of the causal network description. Because all of the variables must be causally interconnected, the causal relations between the key variables and indicators need to be defined. Additional variables are added to the description as needed to create the causal relations across the network that interconnect all the key variables and indicators to the endpoint variables and to cover the whole chain from sources to impacts. This happens as an iterative process of selecting, specifying, re-selecting and re-specifying the key variables, indicators and other variables. Selection and specification of indicators and other variables are explained in more detail in the following sections.
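The requirement that every variable is causally connected to an endpoint can be checked mechanically once the network is written down. The sketch below is a minimal illustration, assuming the network is stored as a mapping from each variable to the variables it directly affects; the variable names, the `fuel price` node and the helper functions are hypothetical.

```python
from collections import deque

# Hypothetical causal network: each variable maps to the variables it directly affects.
causal_links = {
    "traffic volume": ["PM2.5 emissions"],
    "PM2.5 emissions": ["PM2.5 concentration"],
    "PM2.5 concentration": ["exposure to PM2.5"],
    "exposure to PM2.5": ["mortality due to PM2.5"],
    "mortality due to PM2.5": [],
    "fuel price": [],  # not yet causally linked to anything
}
endpoints = {"mortality due to PM2.5"}


def variables_reaching_endpoints(links, endpoints):
    """Return every variable from which some endpoint is reachable along causal links."""
    # Build the reverse mapping: effect -> list of direct causes.
    parents = {name: [] for name in links}
    for cause, effects in links.items():
        for effect in effects:
            parents.setdefault(effect, []).append(cause)

    # Walk upstream from the endpoints (breadth-first search).
    reached = set(endpoints)
    queue = deque(endpoints)
    while queue:
        current = queue.popleft()
        for parent in parents.get(current, []):
            if parent not in reached:
                reached.add(parent)
                queue.append(parent)
    return reached


def unconnected_variables(links, endpoints):
    """Variables that have no causal path to any endpoint."""
    return sorted(set(links) - variables_reaching_endpoints(links, endpoints))


orphans = unconnected_variables(causal_links, endpoints)
print(orphans)
```

A variable reported here would either need additional variables and links that connect it to an endpoint, or it would be removed from the description during the next iteration.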

The process typically proceeds from a more general level, e.g. a general full-chain description, to a more detailed level as is reasonable for the particular assessment. Throughout the full chain, the description of causality can be improved as seen necessary, e.g. by combining detailed variables into more general variables, dividing general-level variables into more detailed ones, adding necessary variables to the chain, removing variables that turn out to be irrelevant, or changing the causal links. As an example, the general air pollutant variable can be divided into specific pollutant variables, e.g. for NOx, PM2.5/PM10 and BS, and also further specified in terms of limitations in relation to e.g. time and space as needed in the particular assessment. Each of these specific variables then naturally has different causal relations to the consequent health effect variables. In practice, the level of detail might also need to be iteratively adjusted to match e.g. data availability and the existing understanding of causal relations between variables. Within Intarese there are plans to create a scoping tool as a part of the toolbox to help in creating the causal network description, but this tool does not exist yet.

When iteratively developing the causal network description, there are two critical tests that can be used in determining the causal relations between variables and the right scope for individual variables:

- clairvoyant test for variables

- causality test for variable relations

All variables in a proper full-chain description should pass both of these tests.

Variables should describe some real-world entities, preferably described in a way that they pass a so called clairvoyant test. The clairvoyant test determines the clarity of a variable. When a question is stated in such a precise way that a putative clairvoyant can give an exact and unambiguous answer, the question is said to pass the test. So in case of a variable, the scope of the variable should be defined so that a clairvoyant, if one existed, could give an exact and unambiguous answer to what is its result.

All variables should also be causally related to each other. The causality test determines the nature of the relation between two variables. If you alter the value of a particular variable (all else being equal), the variables downstream (i.e., the variables that are expected to be causally affected by the tested variable) should change. If no change is observed, the causal link does not exist. If the change appears unreasonable, the causal link may be different than originally stated.
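When the causal network has been implemented as a computational model, the causality test can be mimicked by perturbing one variable and checking whether a downstream variable responds. The sketch below is only a toy: the model, its coefficients, the `vehicle_colour` input and the helper `causality_test` are hypothetical, and passing the test in a model says nothing about whether the causal link holds in reality.

```python
def evaluate(inputs):
    """Toy full-chain model; all coefficients are made-up illustration values."""
    emissions = inputs["traffic_volume"] * 0.2
    concentration = emissions * 0.05
    mortality = concentration * 1.5
    return {
        "emissions": emissions,
        "concentration": concentration,
        "mortality": mortality,
    }


def causality_test(model, baseline, variable, downstream, delta=1.0):
    """Return True if changing `variable` alone changes `downstream` in the model."""
    perturbed = dict(baseline)  # copy, so that all else stays equal
    perturbed[variable] = baseline[variable] + delta
    return model(perturbed)[downstream] != model(baseline)[downstream]


baseline = {"traffic_volume": 1000.0, "vehicle_colour": 3.0}

traffic_affects_mortality = causality_test(evaluate, baseline, "traffic_volume", "mortality")
colour_affects_mortality = causality_test(evaluate, baseline, "vehicle_colour", "mortality")

print(traffic_affects_mortality, colour_affects_mortality)
```

A variable such as `vehicle_colour`, which fails the test against every endpoint, has no place in the full-chain description as it stands.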

The idea behind the indicator selection, specification and use is to highlight the most important and/or significant parts of the source-impact chain which are to be assessed and subsequently reported.

As a result of issue framing, the main nodes and links in the source-impact chain stand out. Key variables can be selected as indicators. Selected indicators should be internally coherent, i.e. they should have clear and definable relationships within the context of the chain. Indicator selection provides the bridge between the issue framework and the assessment process. The variable and indicator specifications, in particular their outcome values and causal relations to connecting variables or indicators, are iteratively improved throughout the course of the assessment process as knowledge and understanding increase. It might also turn out that an identified indicator is not able to properly cover the step in the assessment process on which it should be reporting, as defined in the indicator purpose and scope. In that case, a different indicator should be chosen from among the assessment variables.

- role of indicators?

- redefined selection & specification

- indicators: the leading variables in carrying out the assessment → the essence of the assessment

- criteria for evaluating indicator goodness (instead of selection criteria)

- in principle the same as all variables

- helps to guide the assessment to meet its purposes (if purpose properly defined)

- predefined indicator sets can be useful and helpful

- efficiency, comparability, usability etc.

When presented with a list of indicators it is often not clear why specific indicators were chosen. Individual interests and organization priorities will influence the indicator selections. Familiar measures are more likely to be identified and there is a natural tendency towards indicators that are consistent with expectations.

A comprehensive selection process is important to document why individual specific indicators are selected. The process should be objective and the choice of indicators appropriate and useful. In Intarese we propose to follow the steps of the table below.

During the selection process, we have to make use of selection criteria, which are important tools in helping to define the relevant dimensions of the indicator and to assess how well the indicator actually measures the phenomenon of interest. The set of selection criteria should be relevant to the project. Different sets of selection criteria are in use. In this section, we propose an Intarese set of selection criteria, which is based on the qualitative WHO and EEA approaches combined with an alternative quantitative approach that uses a scoring and weighting framework. Indicators are selected on the basis of how well they meet the criteria, with the criteria weighted to reflect their relative importance in meeting project objectives and reaching the target audience, as explained in the section Intarese approach to indicators.

In principle, any variable could be chosen as an indicator and the set of indicators could be composed of any types that cover the steps in the full-chain description (see section Intarese approach to indicators). In practice, the generally relevant types of indicators, such as performance indicators, can be somewhat predefined, and even some detailed indicators can be defined in relation to commonly existing purposes and user needs. This kind of generality is also helpful in bringing coherence between assessments. We suggest that all variables, and thus also all indicators, are specified using a fixed set of attributes. The reasoning behind this is to secure coherence between variable/indicator specifications and to enhance the efficiency of assessment work and the re-usability of its outputs. Moreover, it helps in ensuring that all the terms used in the assessment are consistent and explicit. The specification of attributes evolves during the selection process.

Key variables in the issue framework and Intarese types of indicators should be considered in preparing a list of potential indicators (step 3). Name, scope and causal relationship of the potential indicators should be further refined and further specified with description, unit and definition attributes. Indicator methods do not have to be very detailed at this stage. It is likely that many indicators available appear to be relevant. Developing a detailed understanding of data issues and calculation methods for all of these indicators takes a lot of time. Instead, the list of potential indicators should be assembled based on the relevance to the scope of the project. Step 4 is a critical phase. Criteria definitions should be discussed, adjusted as required and clearly understood by participants.

The table below defines recommended criteria and definitions for health planning indicators.

[Table image: Indicatorcriteria.PNG]

Some criteria are more important than others for a specific project. This can be incorporated in the selection process by assigning criteria weights. High weights can be assigned to important criteria, and lower weights to those that are less critical. Of key importance is the criterion of validity: indicators must be based on known and validated processes or principles and hold scientific credibility. Content validity refers to the indicator's coverage of the assessment purpose, while criterion validity requires indicators to have predictive power. Include and describe other criteria

Criteria weights have a large impact on indicator scores. A target number of indicators should be set, but the ranking scores will ultimately determine the number of indicators.
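The scoring and weighting framework can be illustrated with a small Python sketch. Everything specific in it is an assumption made for illustration: apart from validity, the criteria names are placeholders, and the weights, the 0 to 5 scoring scale, the candidate indicators and the target of two indicators are hypothetical.

```python
# Hypothetical criteria weights; higher weight means a more important criterion.
criteria_weights = {
    "validity": 3.0,
    "data availability": 2.0,
    "policy relevance": 1.0,
}

# Hypothetical scores on an assumed 0-5 scale for each candidate indicator.
candidate_scores = {
    "mortality due to traffic PM2.5": {
        "validity": 5, "data availability": 3, "policy relevance": 5,
    },
    "PM10 site concentration": {
        "validity": 3, "data availability": 5, "policy relevance": 2,
    },
    "traffic noise annoyance": {
        "validity": 2, "data availability": 2, "policy relevance": 4,
    },
}


def weighted_score(scores, weights):
    """Weighted mean of the criterion scores of one candidate indicator."""
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_indicators(candidates, weights):
    """Return (name, score) pairs sorted from the highest to the lowest score."""
    scored = [(name, weighted_score(s, weights)) for name, s in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


ranking = rank_indicators(candidate_scores, criteria_weights)
selected = [name for name, _ in ranking[:2]]  # assumed target number of indicators: 2

for name, score in ranking:
    print(f"{score:.2f}  {name}")
```

Re-running the ranking with a different set of weights is a quick way to see how strongly the selection depends on them.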

Structure of variables/indicators

- why unified structure?

- efficiency

- physical reality objects & value judgements can both be described as variables

- control of hierarchical information structures (→collective structured learning)

- attributes of variables

- attributes explained

- reference to example in appendix (to be created)

- Name

- Scope

- Description

- Scale

- Averaging period

- References

- Unit

- Definition

- Causality

- Data

- Formula

- Variations and alternatives

- Result

- Discussion
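One possible way to hold the attributes listed above in a fixed structure is sketched below in Python. It is an assumption-laden illustration rather than an Intarese specification: the attributes are treated as a flat list, `causality` is interpreted as the names of upstream variables, and the `is_indicator` flag and the helper `missing_attributes` are hypothetical additions.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class Variable:
    """A variable (or indicator) described with a fixed set of attributes."""
    name: str
    scope: str
    description: str = ""
    scale: str = ""
    averaging_period: str = ""
    references: list = field(default_factory=list)
    unit: str = ""
    definition: str = ""
    causality: list = field(default_factory=list)  # assumed: names of upstream variables
    data: str = ""
    formula: str = ""
    variations_and_alternatives: str = ""
    result: Optional[str] = None
    discussion: str = ""
    is_indicator: bool = False  # hypothetical flag, not one of the listed attributes


def missing_attributes(variable):
    """List the attributes that are still empty, to guide iterative specification."""
    return [
        f.name
        for f in fields(variable)
        if f.name != "is_indicator" and getattr(variable, f.name) in ("", None, [])
    ]


pm25 = Variable(
    name="PM2.5 concentration in Kuopio",
    scope="Daily average of PM2.5 concentration in Kuopio",
    unit="ug/m3",
)
missing = missing_attributes(pm25)
print(missing)
```

Because value judgements and physical phenomena share the same structure, a variable such as willingness to pay to avoid lung cancer could be described with the same class.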

Appendix 2 contains the Intarese issue framework of the WP3.1 Transport congestion charge case study. A name, scope and causal relationship should be attributed to each variable, covering steps 1 and 2 of the selection process.

References

Appendices

Appendix 1. Types of indicators applicable to Intarese

[The text in this section has been taken from EEA Technical report No 25, Environmental indicators: Typology and overview, EEA, Copenhagen, 1999.]

Performance indicators (EEA Type B and RIVM/MNP policy deficit indicators)

Performance indicators compare (f)actual conditions with a specific set of reference conditions. They measure the ‘distance(s)’ between the current environmental situation and the desired situation (target): ‘distance to target’ assessment. Performance indicators are relevant if specific groups or institutions may be held accountable for changes in environmental pressures or states.

Most countries and international bodies currently develop performance indicators for monitoring their progress towards environmental targets. These performance indicators may refer to different kind of reference conditions/values, such as:

- national policy targets;

- international policy targets, accepted by governments;

- tentative approximations of sustainability levels.

The first and second type of reference conditions, the national policy targets and the internationally agreed targets, rarely reflect sustainability considerations as they are often compromises reached through (international) negotiation and subject to periodic review and modification. Up to now, only very limited experience exists with so-called sustainability indicators that relate to target levels of environmental quality set from the perspective of sustainable development (Sustainable Reference Values, or SRVs).

Performance indicators monitor the effect of policy measures. They indicate whether or not targets will be met, and communicate the need for additional measures.

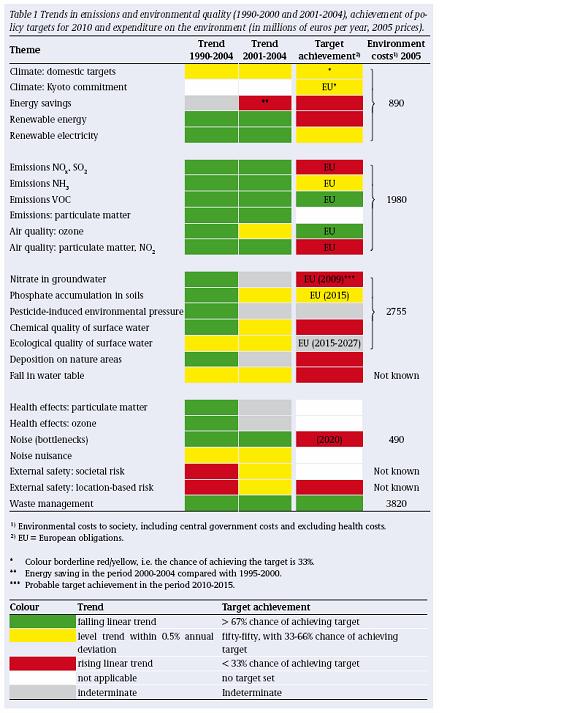

MNP example on policy deficit indicators

To illustrate policy deficit indicators used in various environmental themes, an example has been taken from the annual Environmental Balance report (2006) of the Netherlands Environmental Assessment Agency. It uses a simple table format with different colours to show time trends and target achievement.

Figure 4 MNP example of policy deficit indicators

Efficiency indicators (EEA Type C)

It is important to note that some indicators express the relation between separate elements of the causal chain. Most relevant for policy-making are the indicators that relate environmental pressures to human activities. These indicators provide insight into the efficiency of products and processes, expressed in terms of the resources used and the emissions and waste generated per unit of desired output.

The environmental efficiency of a nation may be described in terms of the level of emissions and waste generated per unit of GDP. The energy efficiency of cars may be described as the volume of fuel used per person per mile travelled. Apart from efficiency indicators dealing with one variable only, aggregated efficiency indicators have also been constructed. The best-known aggregated efficiency indicator is the MIPS indicator (not covered in this report). It expresses the Material Intensity Per Service unit and is very useful for comparing the efficiency of the various ways of performing a similar function.
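
As a minimal sketch, both examples above reduce to the same ratio: an environmental pressure divided by the desired output. The function name, the units and all values below are hypothetical and serve only to show the arithmetic.

```python
def efficiency_indicator(pressure: float, output: float) -> float:
    """Environmental pressure generated per unit of desired output."""
    if output <= 0:
        raise ValueError("output must be positive")
    return pressure / output


# Hypothetical national figures: emissions per unit of GDP.
emissions_kt = 500.0        # kilotonnes emitted per year (assumed value)
gdp_billion_eur = 250.0     # GDP in billion EUR per year (assumed value)
emission_intensity = efficiency_indicator(emissions_kt, gdp_billion_eur)

# Hypothetical car trip: fuel used per person per mile travelled.
fuel_litres = 6.0
persons = 2
miles = 60.0
fuel_per_person_mile = efficiency_indicator(fuel_litres, persons * miles)

print(emission_intensity, "kt per billion EUR")
print(fuel_per_person_mile, "litres per person-mile")
```

A lower value means a more efficient product or process, so a time series of such ratios shows whether efficiency is improving even when total output grows.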

Efficiency indicators present information that is important both from the environmental and the economic point of view. ‘Do more with less’ is not only a slogan of environmentalists. It is also a challenge to governments, industries and researchers to develop technologies that radically reduce the level of environmental and economic resources needed for performing societal functions. Since the world population is expected to grow substantially during the next decades, raising environmental efficiency may be the only option for preventing depletion of natural resources and controlling the level of pollution.

The relevance of these and other efficiency indicators is that they reflect whether or not society is improving the quality of its products and processes in terms of resources, emissions and waste per unit output.

Scenario indicators

Insert text

WHO and EEA selection criteria

When indicators are selected along the source-impact chain in WHO and EEA projects, the policy context or commonly recognised issues are the main drivers of indicator selection. For example, WHO has developed children's environmental health indicators that measure the implementation of the CEHAPE priority goals (WHO, ENHIS project). Subsidiarity is important as well: information needs to be collected at the most relevant level or for specific policy or management purposes. Detailed indicators for the local level or for specific purposes might feed into broader (core) indicators that can be used at a higher policy level or for general public information. Moreover, the indicators need to be associated with a suite of methods to derive them and with methods and approaches to link the indicators across the causal chain. The availability of information from monitoring and surveillance systems on environmental stressors and health is a further selection criterion (WHO, 2002 and Lebret E & Knol A, 2007).

Besides these principal criteria for indicator selection, which can be summarized as (i) relevance to users and acceptability, (ii) consistency and (iii) measurability, there are several other issues to be taken into consideration. Indicators must be scientifically credible, that is, based on known and validated processes or principles. Sensitivity and robustness are preconditions: an indicator must respond to real change while remaining stable under slight variations. Moreover, the indicator must be understandable and user-friendly (Briggs D, 2006).

WHO has selected a set of environmental health indicators based on these criteria and expert judgements, see http://www.euro.who.int/EHindicators. A protocol for pilot testing the indicators was formulated to arrive at a further selection of core and extended sets of indicators. Proposed indicators were screened by a group of experts in terms of their credibility, basic information on the definition, calculation method, interpretation and potential data sources. During the screening process a template for a methodology sheet was designed, containing the attributes that are summarized in the Appendix. During development of the methodology sheets, further consultation was conducted with national and international experts, international agencies, national ministries and agencies, and holders of environmental and health data. It also became apparent that for some indicators insufficient data was available to continue development.

Indicators were placed in the core set once their policy relevance and the availability of data were confirmed. Indicators that were deemed policy-relevant but for which data is currently not available were included in the extended set of indicators for future development and use. Following the second selection round, the methodology sheets were further refined. The three major tasks were (1) development of a specific technical definition, (2) elaboration of a computation method for each indicator, and (3) a check of data availability in international sources. The process of developing and adjusting the methodology sheets served as a pre-screening to determine the need for testing the indicators. Only core indicators were considered further, and adopted indicators and indicators with readily available data from international databases did not require feasibility testing. Other core indicators underwent a screening process to test their feasibility and applicability (WHO ENHIS final technical report, 2005).
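
The assignment rule described above (policy relevance plus data availability) can be written as a small decision function. This is a sketch only: the names are hypothetical, and the `NOT_SELECTED` outcome for indicators that are not policy-relevant is an assumption, since the text does not say what happened to them.

```python
from enum import Enum


class IndicatorSet(Enum):
    CORE = "core"
    EXTENDED = "extended"
    NOT_SELECTED = "not selected"  # assumption: not described in the source text


def assign_set(policy_relevant: bool, data_available: bool) -> IndicatorSet:
    """Assign an indicator to the core or extended set.

    Core: policy-relevant and data available.
    Extended: policy-relevant, but data currently not available.
    """
    if not policy_relevant:
        return IndicatorSet.NOT_SELECTED
    return IndicatorSet.CORE if data_available else IndicatorSet.EXTENDED


# Hypothetical screening outcomes for three unnamed candidate indicators.
for relevant, available in [(True, True), (True, False), (False, True)]:
    print(relevant, available, "->", assign_set(relevant, available).value)
```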

Appendix 2. Example of Intarese indicator selection and specification

Appendix 3. Comparison of different variable/indicator structures

Table. A comparison of attributes used in Intarese (suggestions), ENHIS indicators, pyrkilo method, and David's earlier version.

| Suggested Intarese attributes | WHO indicator attributes | Pyrkilo variable attributes | David's variable attributes |
|---|---|---|---|
| Name | Name | Name | Name |
| Scope | Issue | Scope | Detailed definition |
| Description | Definition, description and interpretation | Description (part of) | - |
| Description / Scale | Scale | Scope or Description | Geographical scale |
| Description / Averaging period | - | Scope or Description | Averaging period |
| Description / References | Linkage to other indicators | Description (part of) | - |
| Unit | Units | Unit | Units of measurement |
| Definition / Causality | Not relevant | Definition / Causality | Links to other variables |
| Definition / Data | Data sources or Related data | Definition / Data | Data sources, availability and quality |
| Definition / Formula | Computation | Definition / Formula | Computation algorithm/model |
| Result (a very first draft of it) | Not a specific attribute | Result (a very first draft of it) | Worked example |
| Discussion | - | - | - |
| - | - | - | Type |
| - | - | - | Terms and concepts |
| - | Specification of data needed | - | Data needs |
| - | Justification | - | - |
| - | Policy context | - | - |
| - | Reporting obligations | - | - |
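
To make the first column of the table more concrete, the suggested Intarese attributes could be held in a simple record structure such as the one below. This is a hypothetical sketch: the class names, field types and the example values are assumptions for illustration and are not part of the Intarese method itself.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Definition:
    """The three sub-attributes listed under Definition in the table."""
    causality: List[str] = field(default_factory=list)  # names of upstream variables
    data: str = ""      # data sources used
    formula: str = ""   # how the result is computed from causality and data


@dataclass
class Variable:
    """One variable or indicator described with the suggested attributes."""
    name: str
    scope: str
    description: str    # may include scale, averaging period and references
    unit: str
    definition: Definition
    result: str = ""    # a first draft is acceptable
    discussion: str = ""


# Hypothetical example; every value here is invented for illustration.
example = Variable(
    name="Example exposure variable",
    scope="Hypothetical city, hypothetical year",
    description="Annual average, city scale",
    unit="ug/m3",
    definition=Definition(
        causality=["Example emission variable"],
        data="Hypothetical monitoring network",
        formula="Mean of station measurements",
    ),
)
print(example.name, "depends on", example.definition.causality)
```

Keeping the causal links as an explicit list of upstream variable names is one simple way to let a set of such records describe a full-chain causal network.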